|

|

|

14:25 |

|

|

transcript

|

1:03 |

Welcome welcome welcome, to modern API's with FastAPI. I hope you're super excited about FastAPI. I am absolutely excited to teach it to you. To me, this is the most exciting Python web framework I've seen in a very long time. FastAPI builds on all the great work that frameworks like Flask have put in place, these simple micro-framework models, or styles of building web applications. But it does it with a special flair, a special touch of all the modern Python features. So you'll see that FastAPI is a web framework for building Python web applications, that's high performance, easy to learn, fast to code, and ready for high end production. Of all the new frameworks out there, I am certainly most excited about FastAPI, and I think as you go through this course, you're gonna be excited about using it as well. Easy to work with, but at the same time you get to use all the cool modern features of Python, meaning fewer errors, faster to code and so on. I'm looking forward to us getting started with FastAPI together.

|

|

|

transcript

|

3:25 |

Why is there so much excitement around FastAPI? Well, let's go through just some of the things that make it special. FastAPI is one of the fastest Python web frameworks available. In fact, it's on par with the performance of things like Node.js and Go, and that's largely because it actually runs on top of something called Starlette, a lower level web framework, and uses Pydantic for data exchange and data validation. So, when you think FastAPI, you can think fast to execute, fast to run. But that's not the only fast that matters, is it? One of the most important ways to be fast is to create applications fast and not spend a lot of time building them. So it's also fast to code because FastAPI does many of the mundane type of things that you would do in a web application, automatically for us, and some estimates have put it the speed of using FastAPI over things like Flask and Django at 2 to 3 times faster. That's because we can do things like just a API method and say it takes some kind of class, one of these Pydantic models, and it will automatically look and make sure all the stuff that that class says it must have is there, the types the class says it must take are either there or automatically convertible to there, so you have a class that's a location, it has a city and a state, well, it better have a city and a state, and those are gonna be strings that can be converted over and so on. So a lot of the work around like validation, data exchange, documentation, all of that is largely automatic, so you're not gonna have to spend time writing that code. Also, when you don't write code and stuff happens automatically for you, that means you might not be writing bugs. So there's also about 40% less chance of an error because you don't have to write as much validation and data exchange. Also, because of the type hints, it deeply embraces this idea of teaching the editors what type of data is being exchanged. You're passing rich objects to the API methods, all the various parts of the API itself, the framework itself, have type hints, so editors know what to do and give you automatic completion everywhere, less time in the documentation, less time debugging. It's also designed to be easy to use and learn. If you know Flask, you will be pretty close to knowing FastAPI actually, and there's not a whole lot of code you have to write. So not too much to write, as well as not too much to learn, less time reading docs, also, because of the great editor support. When we create our API's, we'll put parameters in there saying "it takes this kind of data, returns that kind of data, and it has this type". Because of all the automatic stuff that's happening for us, you'll see the framework is actually doing multiple things, like ensuring the variable is there, doing automatic type conversion, and so on. So again, less code duplication, fewer bugs. And, it comes with a production ready web server built on Async and await, so you get the highest performance production grade servers, you can just drop right into production. One of the challenges with building HTTP based API's is there's zero documentation. What's supposed to be exchanged? Who knows? Just go out there and read the docs and play with it. But with FastAPI, it automatically uses what was known as "swagger", OpenAPI, to generate documentation for all the API methods, what data is exchanged through JSON schema, and things like that. So it's really, really nice that we get automatic documentation by simply writing our program. Nothing else. So, these are just some of the reasons to be excited about FastAPI, and we're gonna explore all of them throughout this course.

|

|

|

transcript

|

2:05 |

The title of this course is "Modern API's with FastAPI". And that's no coincidence. Let's talk about some of the modern Python features that FastAPI uses to allow us to create nice, modern, Python API's. We've talked about type hints. Type hints are used all over the place. We can say, here's an API method, It takes an integer and an optional integer, It will automatically do that conversion. But with Pydantic, It actually takes this much farther as well as throughout the whole framework it has type hints on its types so you don't have to go to the documentation to figure out what's happening. It's async and await first. There's basically nothing you have to do to make FastAPI async friendly or async capable. All you gotta do is write async a few methods and use async and await within your implementation and you're done. The web server it comes with is one of these ASGI servers, so it automatically knows how to run async enabled frameworks perfectly. So you get great scalability anytime you're waiting on an external system; be that a database, another web service call, a cache, any of those types of things. Also, it takes the idea of data classes and pushes them even a little bit farther with Pydantic classes. These are ways to describe how your data is supposed to be exchanged in terms of classes with type hints, and then do that exchange and conversion automatically with Pydantic. And then the documentation is generated automatically for us and conforms to the OpenAPI documentation standard. The API's are super easy to test, so testing your code with pytest is very, very straightforward, especially because all the strongly typed data exchange that you see living in the actual API definition. So you can just call those functions and off it goes. And also because of the type hints, we get rich editor Support. Editors like PyCharm and VS Code know how to read type hints and then give us all the auto complete information we need so we're not diving around in the docs and asking questions "what's available here?", you can just look in the editor and just keep on coding. Here's a bunch of the reasons, or a bunch of the aspects of modern Python that are leveraged in FastAPI.

|

|

|

transcript

|

3:13 |

One of the questions people always ask is "Which Web framework should I use for building an API? Should I use flask? Should I use Django? Should I use Django REST framework?" like, all these different things. So let's take a moment and look at FastAPI versus other frameworks, real briefly. Well, the big two have got to be Django and Flask. Django has Django REST framework. Flask has some REST-ful extensions, you can add to it. But here's the thing, FastAPI is an API framework first. It's an async framework first. It automatically creates documentation with openAPI documentation, all those things. So it's built specifically to be what it is, an API framework, where as Django, yes it also does API's, but that's not it's main goal. Flask also does API's, but it's not got all that infrastructure there to help you make it go fast. Oh yeah, and both Django and Flask don't fully embrace the async and await style programming. At the time of this recording, Django is working on it, Flask has plans that are not really there yet. So, if you want a modern API and you're starting from now, you're starting from scratch, your starting now, why not choose the most modern framework that has some momentum behind it? We also might compare it to Pyramid or Tornado. Pyramid, I love Pyramid. I think it's a great framework, but it does not, and has no plans to, support async as far as I know. Also, it's more on the web, less on the API framework side. Pyramid's great. There's a lot of things I like about it and I actually created some open source libraries to bring those features that I like about Pyramid to FastAPI, but that sort of not exactly the main topic of this course. But Pyramid, nice. Tornado, Tornado is interesting because it's one of the few frameworks that embraces the async and await style of programming. But FastAPI is the modern version of Python's async. And also there's other specific API frameworks, right? So Django, Flask, all these, they're about building Web apps. They can also build API's. Hug, for example, built on Falcon, is one of these frameworks that's specifically about building API's. "Embrace the API's of the future", the happy Koala hugging bear things says. And Hug is actually nice, but the guy who created Hug actually talked about his admiration for FastAPI and how it's really, really special. Let's look at one more aspect of these here. Programming is not exactly a popularity contest, but when things are popular, that means there's more momentum behind it, there's more other tools to go with it, there's more tutorials and whatnot, so let's just look at the number of GitHub stars around each of these. So, Django: 54,000, Flask: 53,000, Pyramid: 3,000, Tornado: 20,000, Hug: 6,000. You look at FastAPI, It's at 22, 23,000 right now. What's interesting though, you might say, Well, "it's less popular than Django and flask", Yeah, it's only been around a year and a half, and Django and Flask have been around like 10 years. So, that tells you a sense of just how popular FastAPI is. It's way popular more than all the other ones below that have been around a while. But even for the big hitters, it's like halfway there, 1/7, 1/6 the time it's been around, so really, really exciting. There's so much momentum behind FastAPI and I do think it's because of this modern aspect that we've talked about.

|

|

|

transcript

|

2:29 |

Let's take just a moment and talk about what we're going to cover in the course. Instead of going chapter by chapter by chapter, just these are the bullet points of what we're gonna talk about. I just want to set the stage for the big ideas we're gonna cover and we're gonna weave them in and out throughout the various chapters. We're going to start by building a simple API, and I really want you just get a sense of like, here's the essence of FastAPI before we get into any of the details, so right away we're just gonna build a simple API. And then we're gonna explore these language features, these modern Python language features. What is Pydantic? What is async and await and how do you use it? All those things, type annotations, type hints, we're gonna explore the language features so you're in a position to absolutely take advantage of all of them as we go through the rest of the course. We're gonna talk about Pydantic in particular because it's such an important way to model the data exchange and the data validation. So we're gonna focus big time on Pydantic. Also, one of the things that FastAPI doesn't make immediately obvious is how do I actually have a web page? So if I build an API, it has this data exchange and not everything is even executable within a browser, potentially. You could have something that requires a post, or a delete HTTP verb, which is hard to make the browser do without a little bit of a plug-in or something, right? You just can't click the links. You'll see that we can use the same templating language as Flask has, Jinja, to actually write and serve static HTML or dynamic HTML even and then static files. So you could add a little bit of the functionality of what Django or Flask brings by default. You could do that in here is well, we're gonna focus on making that possible. So instead of having two different frameworks, one for your API and something for the Web page part, you could just do it all in FastAPI. After we get all this set up and in place, what we're gonna actually do is build a much richer application that we're gonna build throughout the rest of the course. We're gonna focus on building a rich, full featured API that takes advantage of data exchange, data validation, async and await, calling external services, all that kind of stuff. And then we're going to round out the course by taking that full featured API that we're going to build and deploying it out on the Internet on a virtual machine. So we'll take you through all you gotta do to set up Linux to run production grade FastAPI applications out there on the Internet in some cloud host. So much exciting stuff here, really looking forward to going through it all with you and so these are a lot of neat ideas, and we're gonna weave them through the course as we go through the content.

|

|

|

transcript

|

0:48 |

What do you need to know to take this course? Well, simply put basic Python. So we're assuming that you know how to write core Python. You can create functions, you can work with strings. We do talk about the advanced modern Python language features and how to put those into web applications. So we don't necessarily need you to know those, but you should have some familiarity with Python itself because we don't start from absolute the beginning and some basic understanding of HTTP. How HTTP works, how to exchange HTML, what HTTP verbs and so on. We do touch on that as well, but again, we don't go super deep into it. We just sort of talk about the aspects. So these are the two expectations that we assume. Maybe you can get away with less with a little extra research, but hopefully you know a little bit of Python at a minimum to take this course.

|

|

|

transcript

|

0:39 |

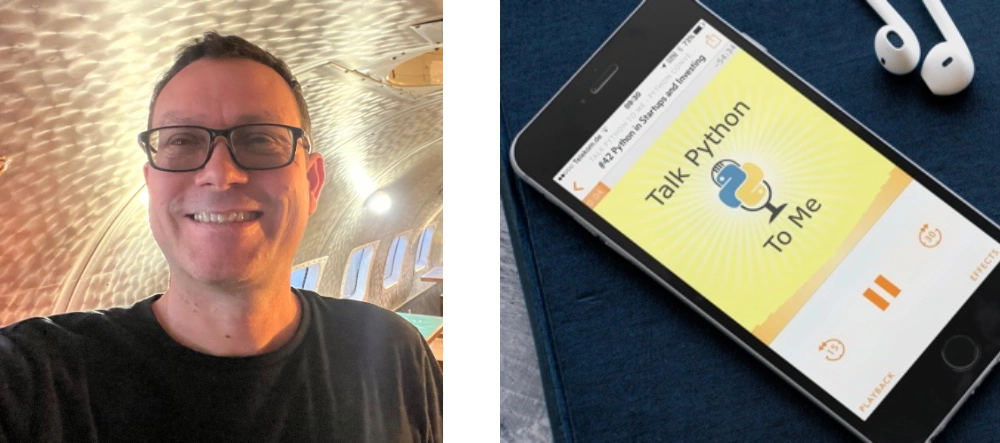

You might have been wondering, Who is this guy talking to you? It's Michael. Hey, I'm Michael Kennedy. Nice to meet you. Here I am. You can find me on Twitter where I'm @mkennedy. You may know me from the Talk Python To Me podcast or Python Bytes podcast I host with Brian Okken. I'm also the founder and one of the principal authors over at Talk Python Training. So I've been doing a lot of work in the Python space for a long time and all these different outlets give me a good view into what is important and what is happening in the Python space, and FastAPI is definitely coming up more and more often as an important part of the ecosystem throughout all of these areas. Great to meet you. Looking forward to doing this course with you.

|

|

|

transcript

|

0:43 |

Finally, before we get your machine set up and dive in to writing code and fully covering FastAPI, I just wanna let you know, I recently did a podcast interview with the creator of FastAPI, Sebastian Ramirez, over at Talk Python. So, it was called "Modern and FastAPI's with FastAPI" recorded october 4th 2020. So, if you wanna listen to the creator and me have a conversation about how it compares to these other frameworks, why he created it, and a whole bunch more, just visit "talkpython.fm/284" and listen to it, you know, throw it on while you're driving somewhere in the car, doing some housework, It'll definitely get you motivated to go through the rest of this course and take advantage of this cool thing that Sebastian built.

|

|

|

|

4:50 |

|

|

transcript

|

2:17 |

In this short little section, what we're gonna do is talk about what you need to get your machine set up to follow along. And throughout this course, I strongly encourage you to build the application you see being built during the course and to play around with slight variations of that. In order to do that, you're gonna need a few things set up on your system. First of all, would it surprise you to know that you need Python to build a Python Web framework, or build apps with the Python Web framework? Of course not. But specifically, you're going to need at least Python 3.6, so if you have something less than 3.6 it's just not going to run. FastAPI has a minimum Python requirement of Python 3.6 and honestly, the newer the better. This course, we're gonna be using Python 3.9 throughout the course. You might wonder, well, I think I have Python, but not sure, do I? You can check on Linux and macOS. You can type "Python3 -V" and get Python 3.8, 3.9, hopefully something new. If that's below 3.6 or it doesn't come back with anything, You gotta get Python. Over on windows, It's a little more complicated because sometimes there's a Python and Python3 command, sometimes there's just a Python command. Python is your best bet, so type "Python -V" and if you get output that says "Python like Python 3.8, 3.9", you're good to go. But if you run this and you get no output and yet it doesn't crash, that means that Python is not actually installed. There's this shim that Microsoft has put into Windows that if you just type Python alone it will launch the installer to go find it on the Windows store and potentially install it. But they've made this oversight where if you pass command line arguments to it, all it does is nothing, doesn't launch the installer, doesn't say I'm not really Python, It doesn't give a version. So be really careful. If it does nothing, you don't actually have Python. But if it gives you some output like this, you're good to go. Finally, if you need help installing Python, check out "realPython.com/installing-Python" they've got a guide that they're keeping up to date over there. Personally, On macOS, I use Homebrew to install Python 3, 3.9 currently, and then on Windows I use chocolatey, but you can use whatever systems you want or whatever mechanisms, There's actually a lot of ways to do so, so check it out of here at "realPython.com/installing-Python"

|

|

|

transcript

|

1:41 |

We're going to pick an editor and use it throughout this course, as you can imagine, we gotta have an editor of some sort. I'm going to use PyCharm. It's absolutely my favorite editor for working with Python projects, especially larger ones like the one that we're gonna work with here with different types of files and so on, on the Web. Now, PyCharm comes in both a free and a pro edition. The free one is free and open source, The pro one is paid, it's not too much for a personal version, but many people don't want to buy PyCharm, and that's totally fine. There's no need to. You can 100% use the free community edition for this course, and it'll be fine. There's literally four lines of code that you're gonna see me write or would have to write that with the community edition you will not get auto-complete, and with a professional edition, you get limited auto-complete. That's the only difference. So no problem about using the free version. If you do want PyCharm, I recommend you get it through the toolbox app. If you install the jetbrains toolbox, you can install multiple apps, it automatically updates them, you can cycle between versions, there's a lot of cool things, it auto updates, a lot of cool things that happen by installing this. So, if you're gonna go with that path, go with the toolbox. Finally, if for some reason you don't want to use PyCharm, the other really good editor these days is Visual Studio Code. Now, when you get Visual Studio Code, it doesn't come by default ready to work on Python. But if you go to the extensions by clicking that little box and you get Python right there, click "Install Python" and also install PyLance after that and then you'll be in good shape. Those two addons make working with Python code in VS Code quite good.. Not PyCharm good, but pretty close. So pick one of those two editors and make sure you're set up and ready to use it, you're gonna follow along.

|

|

|

transcript

|

0:52 |

Finally, make sure you get the source code and you take it with you. Over here on GitHub at "github.com/talkPython/modern-apis-with-fastapi" dashes between it, is where the source code is. You can clone this repository with Git, if you're a friend of Git, but if you're not familiar with Git or you don't have installed or just don't use it for some reason, No worries. Just see that green button? Click that, there's a way to download a zip file, you'll get the same thing. So, make sure you go over here. If you do have a GitHub account, star it and consider forking it so that you have it there to take with you. Many of the things we're starting from scratch and building on the screen, and everything you see me type on the screen will be saved in GitHub. But every now and then, you might want to just jump into the middle and grab some of the code to work with. So, this is the easiest way to do that, just get it off GitHub. Once you get the source code, be sure to follow along, you'll get a lot more out of the course that way.

|

|

|

|

30:58 |

|

|

transcript

|

0:50 |

It's time to write our first FastAPI API. We're going to create a simple application that will actually show us a ton of the moving parts of FastAPI. Now, because I want to just focus on getting everything set up, I'm gonna first have us build a simple little calculator API. You'll be able to submit a couple of numbers that will do some simple math. The programming will be as basic as it gets. But what will not be basic will be all the things around FastAPI we're doing: the types being passed in, the validation, the required fields, the error reporting, all of those types of things. We're going to focus on that and then going to come back for a second iteration and build a much more interesting and complicated API that would be, well, more realistic. But let's start by building a really cool calculator app.

|

|

|

transcript

|

3:49 |

Now for us to build our application, we need to create a new web project. Now, almost every web project that I know of depends on external libraries and anytime you have a Python library or Python application that depends on external libraries, you're gonna want to start by creating a virtual environment. And of course, this is no different. So that's what we're gonna do. here we have the demos, and right now we only have chapter three. Of course, all the chapters will be here by the time you're watching the course. Let's go over here, this will let me just pop open a terminal right there. And what we're gonna do is we're gonna create a virtual environment. So I'm gonna say "Python3 -m venv venv" and now we're gonna activate it. So on Windows, you would activate it just by saying "venv/scripts/ activate" But on Mac and Linux, you say dot, to apply it to this shell, then "venv/bin" why is it bin not scripts? Take that up with someone else. I have no idea. But we're gonna activate it like this and you'll see our prompt change either way, where it says now you're in this virtual environment. We can ask things like "which Python"? Yep, it is the one that we're working with. Another thing we wanna look at is by default, whenever we create a new virtual environment, there's a 95% chance that pip itself will be out of date. So let's just go ahead and upgrade that real quick as well. I'll just go and do these things next time and not run you through it, but first time through, I want to talk about it. Alright, everything looks like it is good and we have our virtual environment. Let's go ahead and just come over here and open this in PyCharm. Now on macOS, you could drag it onto the icon. on the other OS's, you just go to PyCharm, say "file, open directory" or visual studo code, and open that directory as well. Alright, so notice down here, it says "no interpreter". There's a chance that it might pick the right one. Let's go and see which one it's after. This seems to always change, it's super frustrating, and the way it works, like Sometimes it works and finds the local one we created, sometimes it doesn't. This time it didn't. So we're gonna say go to our home directory, go to venv, bin or scripts, pick Python. Okay, now it looks like everything is working. It's gotta read through Python real quick just to make sure it understands all the types and then we'll be ready to get going. Next up, let's go and create a "main.py" That's pretty common in FastAPI to have a main that we're going to run, and I'm just going to right click and say "run" to make sure everything's working. Okay, the last thing we need to do is we're gonna need to be able to use FastAPI. And if I go and run this again, then it's not so happy about it, right. The last thing we have to do is install FastAPI, and we're going to keep track of our dependencies by having a "requirements.txt", and in here, we're gonna put "fastapi", for now, we're gonna have a bunch more later, and of course it's suggesting it could install it for us, but I'm just going to go to the terminal and show you what we would run more generally would say "pip install -r requirements.txt" with the virtual environment active, we get all the dependencies of FastAPI at this time. Alright, It looks like everything is good. I think it believes it's misspelled, which is unfortunate, but you could tell it to stop showing you that. And let's just do a print, "hello FastAPI" and run this. Alright, Perfect. So it looks like we've got our system set up, ready to run Python or running Python 3.9 at the moment. If you're unsure which version you got, you can come down here. We have 3.9.0 at the moment, but again, anything from 3.6 or beyond should be fine for what we're doing and were able to install and import FastAPI. So I think our app is ready to, well, begin writing it actually.

|

|

|

transcript

|

5:17 |

Well, this was fun, but "hello FastAPI" is not exactly what we were hoping. We were hoping to build a web application that our programs and other services could talk to. So let's go and build that. Now, if you've ever worked with Flask, FastAPI is sort of a Flask derivative style of API. It's not the same, but a lot of your intuition will work for it there. So what we're gonna do is going to create, either call it "app", or I've also seen it called "api", let's go with "api". We'll come over here and say "fastapi" and want to create a instance of FastAPI like that. We'll drop that magic there. And then we're going to define some function. This is gonna be an API endpoint. So we're gonna have a calculator, and we'll just say "calculate" like that and let's say it's gonna do something incredible like return two plus two. And then in flask you would go over here and say "run", but that's not what happens here. What we need to do is provide an external server and there's a really awesome production level high performance one recommended here, and we're going to use "uvicorn". Now, notice there's an error here because uvicorn is not necessarily included when we install FastAPI. So that's the next thing, to go over here, again It's not misspelled. This time, I'll just press the button and let it do the install. You'll see it happen down at the bottom, and we're good. Now that's up there. And so then we just say "uvicorn run" and we pass it the api. That's it. Except how does it know that calculate has anything to do with this, and what URL should we use anyway? So the last thing we're gonna do is come over here and say "api dot" now be careful. If you've been doing flask, you might type route. That's fine. But what you really wanna probably say is, I wanna only respond to get requests, http get requests, and then we'll pass over, you can see there's a few options here, a lot of stuff going on, but our initial usage is simple, we're just gonna want to do a get against "api/calculate" like this. Alright, now, let's try to run it and see what happens. So let's just open this up and see what we get. Now, this is not the most encouraging response here. What is the response? Not found. Well, that's because nothing is listening on just the forward slash, like the basic url. So we're gonna have to go to "api/calculate" We can fix this, like opens as a crash sort of thing in a minute but, calculate, spelling is hard, but once you get it right, yes, look at that, 4, the answer is 4, and maybe we want to respond with some sort of JSON, right? That's how API's are. So we could come over here and we could say "result =" let's just say, here we go, we're gonna calculate the value then we're gonna store it into this thing that we're gonna return, and actually, let's go ahead and inline that right there, so we'll just straight up return that value, that dictionary, there. So we'll do our work and then we're going to return the dictionary. Run it again, and now you can see if we go look at the raw data we have proper JSON. So when you're talking to API's, it probably makes sense to have some kind of schema, some kind of data structure rather than returning just the number four or some string. Not always true, but generally it's a good idea. So we'll just start doing that here and we'll build on that, of course, as we go along throughout this course. So that's it. Let's just review real quick what we've got to do. We import FastAPI, we create an instance of the API, and on there we use that to decorate the functions, in this case we say we have a "calculate" function and it's going to handle requests to "/api/calculate" and right now, it doesn't take any arguments. We're gonna work on that. But right now, it just says, "Well, okay, you want some calculations? How about two plus two? That's cool". And we'll pass that back. Then we're gonna return this dictionary, which is automatically converted to JSON, and also, if you look over here and we go to the network and we do this request, and we look at this one, you can see that the response content type assumes that it's application/json. So it says this is JSON, which is all the more reason that the actual thing that we get over here should be JSON, not just the number four. So it automatically returns this as JSON if we passed the dictionary there by default, and then in order for our application to start, we're gonna come down here and say "uvicorn, run this application". And we could also add in here "port", I'll explicitly call out what is the default value so you can see if you want to adjust them. Here we go. That way if you saw, even if we put like a 1 here it'll listen on port 1 and so on, or 8001. Cool. Alright, so this is simple, right? This is incredibly simple to build a high performance api and get started, we just have to have a project, set up the dependencies FastAPI and uvicorn, create a simple method and call uvicorn run. Done. You've built an API. Pretty awesome, right?

|

|

|

transcript

|

0:45 |

Now that we've built our first FastAPI endpoint, let's just review what it takes to get a minimal API endpoint up and running in an application. Pretty simple. So we're gonna import FastAPI to use FastAPI and then we have to have a server to run it, so we're gonna use uvicorn, and we create an instance of the FastAPI object, calling it "api", create a function, some kind of method that we're going to call, and we're gonna decorate it with http verbs and their configuration. So an http get against api/calculate will run this right here. And then we just say "uvicorn.run" we pass it the API object, we say the host and we say the port and that's it, we're off to the races. We have our app up and running.

|

|

|

transcript

|

3:09 |

You can see that working with http verbs: get, post, put, and so on, all of those things are right up in front when we work with building API's with FastAPI, and understanding what verbs are out there and when they should be used is really important when you're building REST-ful API's. So let's just take a moment and talk about the four most common http verbs. There are others that we're not gonna mention here, you can go Look at this link at the bottom to see them all. The one that everyone who has interacted with a computer on the web is familiar with is "get". So get is just give me the information of this resource. Like, anytime you're using a browser, most things you're doing are just click a link, do it get. so the get requests a representation of a specified resource, in our case the result of that calculation, and it should only be used to retrieve data. One of the really important ideas around get is that it's supposed to be potentially cacheable, whether or not you access it multiple times or whatever, it shouldn't change the state of the system. There was a really interesting bug in Wikipedia way, way, way back in the early days when there was a delete button, actually had a just a get request to delete a resource, and then the web spiders like Google went through and started trying to index Wikipedia and started following those links and started deleting the pages. So, yeah, don't do that kind of stuff. Get should just return things. Think of get as "read only". If you wanna change something, if you want to modify something, use "http post". This is to submit some new object, some new data, which is going to cause a change in the server, potentially. So if you're gonna log in, you're gonna create an account, you might submit your username and your password that you want to create the account with. And of course, the state that's being changed on the server as well now there's a new account with that username and password that you can log in. Delete, similar to post in that it modifies things, but if I want to remove a resource, so if I had like a bookstore and I had "/books/book_7" and we decided, you know, we don't want book seven anymore, then the API could set it up so I do a delete request against that URL, against "books/book_7" and the server could respond by deleting book seven, no longer there. Also similar to post is we have put. Now with post you say "I have some data, I want you to create it on the server". Probably what comes back is "Great, I created this, and here is where it lives". But if you already know where you want it to be in terms of the URL, think of like a blog post, a blog post you might already know the url where you want your blog post to live, but it doesn't yet exist, you could do an http put and say "I would like to make this location have this blog post", right? You don't let the server control where the thing gets created, you say explicitly "server Put it here". This is not nearly as common as post, but sometimes you'll see it used. These are the four most common http verbs as far as writing API's and you know explicitly making them part of your API. There are a bunch of others, like I said, at the bottom, you can check out, but get for read only, post for making changes, delete to delete, put if you know where it's going.

|

|

|

transcript

|

2:14 |

http verbs are what the client is instructing the server to do and telling what the server to do. But the server has an ability to talk back to the clients say "yes, that worked" or "no, that wasn't okay because of this", or "you don't have permission". So the way the server communicates that is with http status codes. So if you are not super up on your http status codes, you're gonna need to really get those a few of those figured out to see exactly what you should tell all of your clients. So a really good place to go is "httpstatuses.com" statuses not status. There we go, http status codes. Now, This is a really cool site because it doesn't just have them grouped and categorized, but it has details. So before you saw we got a 200 OK when we did a request for our calculate. If you click on this, you get all sorts of information about when you should do that, how you should do it okay, so get represents, it's a good thing to return for a get If here's a proper representation. The payload sends a 200 response. It depends on the request method. The meaning is basically "that worked" or this is the same as get but no data and so on. Now, if you come down here, you can even see, like, some of the places you might use it. There's like constants in Python, like "http.HTTPstatus.OK" And you can use those if you like. You can also come down here and we could see created. This is much more common for a http post. You know, once you've done a post like hey, that worked, I created it, And then you're going to say where it was. So all the 200's, these are good. Over in the 400 section is another place where you definitely wanna look. So bad request. Bad request is awesome because it means you passed some data, like invalid data, or some other thing that's not good, so we're not going to process that. We come down here and say "you don't have permission to do it", or "this data was unacceptable", so on. And then finally, the ones we hope we don't see too much of are the 500 internal server errors. So this is like the server crashed or some part of our infrastructure couldn't get to another part, NGINX Couldn't talk to Gunicorn, one of those types of things. Definitely look through here when you're thinking about what should I send back to answer this request, especially if there's something that went wrong. What should I send back? "httpstatuses" is a great place to go Look.

|

|

|

transcript

|

2:59 |

So our calculator API is working. If we open it up down here, let's see what we got. Remember, we have a not found, but if we go to "api/calculate" we're getting this data back and it's 4. Well, how exciting and how generally useful is this calculator? Not at all, right? All we're ever going to get is 4. So the answer is always 4. Maybe it should have been 42, but 4 it is. So let's go and change this so we can pass some data in. Now let's say we take an "x, y, and z", those are the three things we could pass in, and the value is gonna be "x + y" like, for now we'll do times, maybe we'll do divide, It will let us do something slightly more interesting in just a moment. So we could go over here, and if we run this again, we come up and say "?x=2&y=3&z=10" What are we going to get here? Are we going to get, what did we say? X plus y is 5, and then times 10 it should be 50, are we gonna get 50? Let's find out. No, we got nothing. So we cannot multiply sequence by a string. Wait a minute. What's going on here? Let's take away the Z for a minute. Try that again. 23, 23 as a string. If you look at it real careful, notice right here, there are quotes, that is not an integer in JSON, that is a string. So what's the problem? The problem is, everything passed around on the Web is strings by default. But something needs to say "no, no, no. We expect this to be an integer". So if I go over here and I change this and I use type hints, you say this is an integer and this is an integer and we say this is an integer. Let's do this again. Also notice it didn't say anything about any of these values being required. But now, let's try it again. Well check it out. We got the value 5, and if we look at the raw data, it doesn't have quotes, it's really 5. And let's go back here and put our times z, run it again, Yes, we got 50. Okay, this is working pretty well. What happens if I omit Z? Look at that. We got an error message and if we actually look exactly what we got, it says "there's something wrong with the query string" and there's a Z that's supposed to be in there. But there's no Z in the query string, but we expected one, and the problem is the Z is required and the type of errors is that it's missing. So let's put our Z back. Okay, so you're gonna see when we pass these over, If there's no default value and there's no optional value, things like that, these become required. But we could say over here that this is a 10. Here we go. Now, we don't have to specify the Z because there's a default value for it. But if we do for the omit the y where there is no default value then hey, guess what? y is missing. We gotta pass it over. Alright, cool. So this is how we pass data at least from the query string as well as you would see the path, like we could do like X, Y like this and make that part of the URL, doesn't have to be the query string, and you would be doing this exactly the same way. Okay, so this is how we pass data over to our API method.

|

|

|

transcript

|

1:12 |

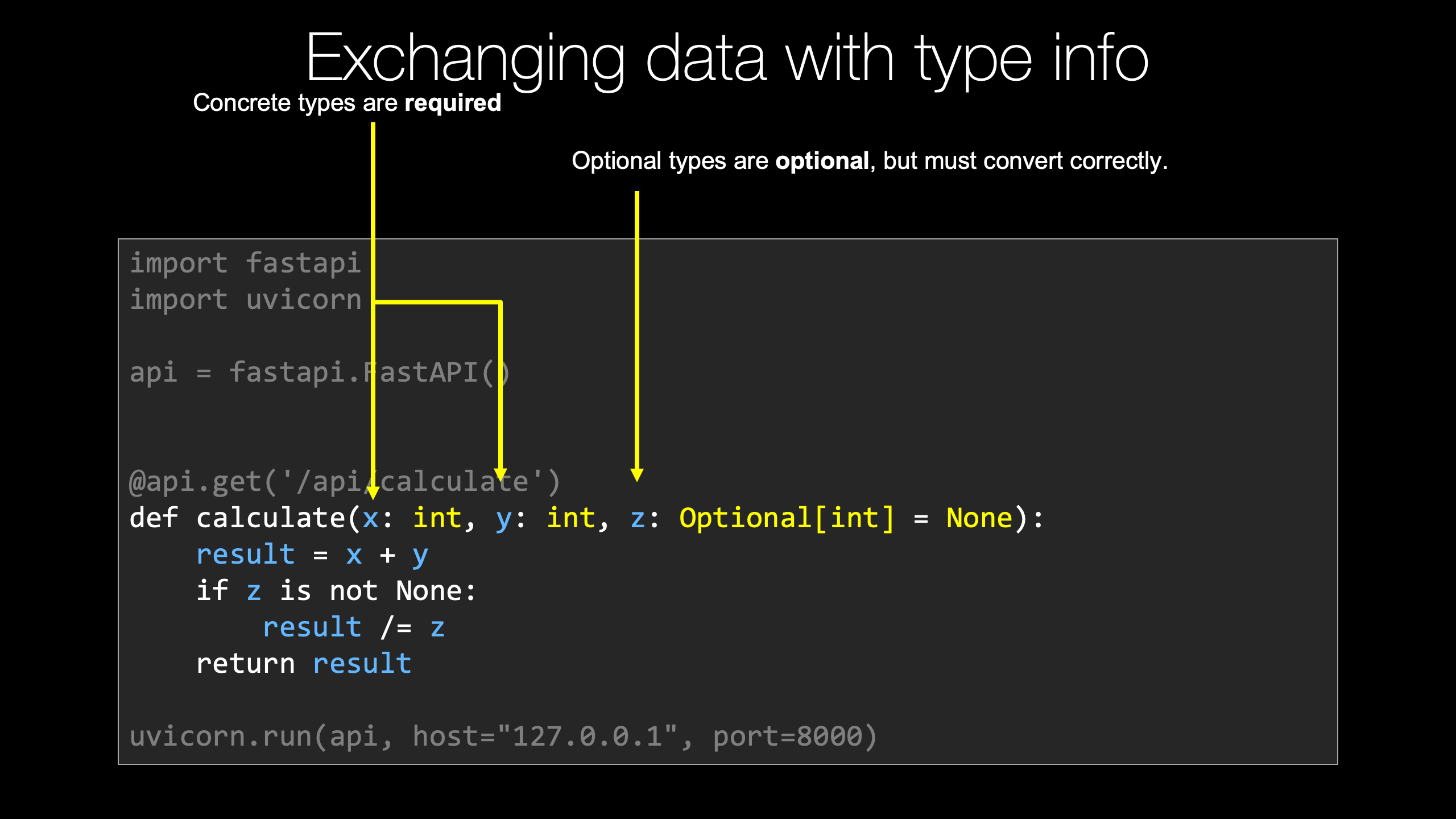

We saw that if we want to pass data, especially from the query string or part of the URL path definition, where we can put variables in there, what we have to do is create the same variables, or arguments, in our function. So here our calculate function now takes an X, Y and Z. Now we're going to specify these to have concrete types. So over here, these concrete types that have no default value these are required. We can also specify an optional integer and set it to be none. That way, we know for sure that no value was passed for Z over here. This value is optional, we could pass none or just completely omit it, and it's going to come through with its default value of none. But if we do pass some value for Z, just like X and Y, it has to be a valid integer. I don't think we saw that in the example, but if we had passed like ABC for X it would say "No, no, no, the type is wrong". X has to be an integer and we can't get an integer out of the string ABC. So we get this automatically converted to integers, and the types are validated that what we pass over are either completely missing or if they are there, they could be converted to the types that we said they should be, including Z, which is optional. It either has to be missing or it can be an integer.

|

|

|

transcript

|

5:33 |

We saw that by default our API method is going to return 200. That was great. And you can actually control it up here by setting the status code to say, like, 201 for some kind of post or something along those lines. But what if we change this a little bit and we put a divide here and let's go ahead and change this to an optional, we gotta import that, oops not like that, import it like this, an optional integer, and by default, there's nothing there. If we're going to return, or we're going to compute this, how well do you think dividing say the number 5 by none is gonna turn out? Not so well. And let's go a little step further and let's actually kind of echo back the arguments as we saw them, right? They pass something we want to say "this is what we got from what you sent us". We'll put our X, Y, and Z here, so we're gonna need to do some sort of test, right? Value is gonna be X plus Y. And then we'll say, if Z is not none, you might say if not Z, but yeah, if it's not Z, and if it's equal to zero, maybe we want to respond some other way. So if it's not none, we're going to say "value /=" you know, divide value by Z. They're going to return it. Let's try this. Alright, we do our request. Right now, Let's put a Z back first, Z equals 10, like it was before. Perfect. So we got 2 + 3 is five. Divide it by 10 is 0.5, everything's great. Well, now that Z is in there, what if we say we omit Z, that's okay, it doesn't crash, it just doesn't do the calculation. But what if we put in Z equals zero? That explicitly is not good. It's not that the Z was required, It's just that this value of Z is bad data. So how are we going to communicate that back to them? So let's go over here and right at the beginning, we'll do a test. we'll say, look, if you passed in a Z and it's exactly the number zero, well, this value is just not gonna work for us, right? How do we communicate that? Could you come over here and say "error: Zero Z"? right? return that. We return that. Well, yes, we technically could return it, but what status code is it going to send? What message is it going to communicate to the client? 200. Everything's okay. So yeah, not really. Like we could do this, but it is not going to communicate well with the API endpoints. Like especially think of a program trying to talk to this API, how is it going to know which is which, Right? So what we want to do is return a "fastapi.response" and then we could just set two things, we can set the content to "Error: Z cannot be zero", and then the status code, here's our http statuses, And let's just say 400 bad request, right? So we're gonna come in here and say, Look, if you give us zero, this is totally bad. We cannot continue. It's gonna crash. But we don't want our server to crash. We want to say the reason this has gone wrong is the data you supplied, not 500 server error, which we saw up here, internal server error, this looks like the service broke, not like they gave us bad data. It's also very unhelpful to them. So if we just rerun this now we get an error. And more importantly, more importantly, if we look at the network, 400 bad request, right, 400 bad request. But if we put like 1, now we get a 200 ok, we get our value back. And if we put no Z, that's fine, we get 200, Just a different calculation. So when we want to communicate one of these non standard responses, not everything's okay and here's your data, we're going to create a FastAPI response, set its status code, potentially set its message. Now, one of the things you might want to do, might want to consider here I'm not going to do another simple example, Maybe we'll do it later, is if you look at this over here, get our Z equals zero back, so what came back is 400 but the type it came back is plain text, whereas the normal response like this, this comes back as JSON. Let me just do it up here so you can see it actually in the network tools right? This is coming back as content application/json. So what you might consider doing is creating a dictionary in here like this, did I say I wasn't gonna do this? Let's just do this like that. It's a string, not not some JSON. And then we come over here and we could say media type equals application/ json, like so, and put the comments in the right place. There we go. And that went a little wonky, didn't it? Okay, weird indentation got in there, but let's go try this again. Here we got back this application is JSON. Everything was okay, but if we got an error, notice the data we get back is still JSON. This, we could look at that. The response is still JSON. So that way the clients assume it can always just get the document and look at what they got back. It's not like sometimes it's text, sometimes it's JSON. I don't know, I think that's kind of nicer. It's up to you guys whether or not you want to follow up on that and do this. But by default FastAPI returns errors that are not controlled by you as JSON. Like, remember, if we click here, we get a 404, but the 404 is actually a JSON Document. Plus the 404. So maybe it makes sense for us to factor that into our error messages as well, and pass over this application JSON.

|

|

|

transcript

|

1:28 |

We saw we could create a response and return it with some content, that was JSON, with a status code, however FastAPI actually has some shortcut ways to improve upon this. So if we could go over here and say "fastapi.responses", when you look in here, notice we have a JSON response, a file response, an HTML response, plain text, redirect, etcetera, etcetera, etcetera. So that's cool. Let's go into the JSON one which is up here. And how is this different? Well, it's gonna automatically set this to the media types, so we don't have to specify that. And also, the content is going to be set to be not a string which would parse this JSON, but some kind of dictionary. Just a straight dictionary there. And then the status code, well, we're still gonna need to set that, because that's kind of error condition, like that. Okay, so let's wrap that so you all can read it. They should do exactly the same thing as we had before. We go over here and we refresh, everything is good. But now if we make it error out, notice again we get some JSON passed back here and if we look at the type, it's application JSON. So same basic idea but we just have to write a little less code. We don't have to set the content type and all those things. Also, if this content you wanted to pass back had come from some other location or included other data, you'd have to use the JSON Library to turn into a string, which should be a hassle, So this is much better. If you're gonna return a JSON response, do it like this.

|

|

|

transcript

|

1:22 |

If you've received some input, some data from the user that you just can't process because it's not in the right format or something's missing, some of that is actually handled already by FastAPI. So for example, if X is passed over and the X is a string that can be converted to an integer, FastAPI will just handle that. It will return a JSON response with a dictionary that has some details about the error message. But there's other times where just certain values are required. Like, for example, you have to have a non-zero Z if Z is specified in this calculation because you'll get a divide by zero. So in this case, we got Z from the user, and it's zero, so we need to tell them "no, no, no, this is bad". If we just try to do the division, it's going to crash our server, they'll get a 500 response, still think our service is unreliable when really they sent us bad data, so we want to communicate that back. We're going to create some kind of error dictionary not shown here that we're gonna return like error is the message, you know, Z has to be non-zero for division and so on. We're going to do that as a FastAPI JSON response. We're going to set the status code to 400 or whatever status code you think is the best fit and then the content to a dictionary, which will be converted to JSON, and sent back along with the status code, and that's it. Super easy to return errors in this controlled way back to the clients.

|

|

|

transcript

|

2:20 |

There is one more thing I think we should check out and fix before we move on from this simple calculator API. Recall when we run it, we can go over and we can go toe whatever this URL port is here, "/api/calculate" and pass X and Y and that works great. But if we just go to the server, something bad happens. We get a "404 not found" right? See this? And if we actually pulled up the dev tools, you would see the response code, it's 404 not found. That is not ideal for just the home page of the domain name of your API. So let's take the smallest measures here, and we'll take bigger measures later to improve upon this. So let's just do a little bit of tiny work here so that we can have something. So let's go API, and we're gonna do a get to forward slash. Whatever we write next is what's gonna happen when you just request the server by itself. So I'm gonna create something called "index" and I'm gonna just paste some text here. I'm gonna have a body which has some HTML, the body, then the head, and then a div, and maybe it even needs a "<HTML> </HTML>" to be proper, even though it would have worked before. And we can just use what we knew before, we can just return a "fastapi.responses.htmlresponse()" and we want to set the content is this body. Alright, that's it. And with we had a little continuation character there. Now, now that's it. Nothing else got messed up. Okay, good. So if we just rerun this and we click here now, Yes! Welcome to the super fancy API. And now if we click on that, it takes us over to our actual API. Because it just seemed wrong that you opened up the server, and it just says "crash, this is not working". So here's a little tiny bit of work that we can do, stuff we've already discussed of just passing back some alternative response besides JSON and, you know, hooking this. Actually, this is the gateway to having a whole bunch of pages and stuff on our site if we wanted, we're going to talk more about using things like Jinja templates, and maybe even better templates, later. But for now, this will at least let our site work in a way so it doesn't look broken, and then we can go on and work with our API here. So that's a quick introduction to FastAPI. You can see it's super clean, super easy, and there's tons of neat stuff that we haven't even gotten to yet.

|

|

|

|

36:45 |

|

|

transcript

|

3:06 |

Let's just take a moment to reflect on the title of this class. Modern API's with FastAPI. Well, what is this "modern" about? Is it just because FastAPI is new? No, it's because FastAPI embraces many of the latest Python language features and external libraries, leveraging those features, to make it easier and less error prone to write API's and do that quickly, so fast. In this chapter, what we're gonna look at are these language foundations. So in particular, what are we gonna cover? We're gonna first start by talking about type hints or type annotations. We already saw that if we specified a type which wasn't an API method to be an integer that meant that one, it was required, and two, if a value was passed, it would be automatically converted over to an integer. So it's not just that the type hints provide a clue about what type we expect, FastAPI uses those to actually convert the types themselves. So we're going to talk a little bit about this idea of type hints. One of the super cool things about FastAPI is it supports the modern async and await programming model that have made things like Node.js and Go so incredibly fast. And, if you go look at the documentation, the FastAPI folks claim that they're about as fast as Node.js and Go. Do you know why? Because they're leveraging the same underlying feature. So we're gonna look at the core idea behind async and await. Later, we're gonna actually apply that to some of our view methods in our internal libraries we're using, which would be cool. But here, we're gonna focus on just getting that idea down nice and clear. We're gonna talk about ASGI servers; Asynchronous Server Gateway Interface's. Normally, when we work with Python Web apps like Flask and Django, they're working with what's called a WSGI or "wizgy" servers. This is an older model, or older gateway interface I guess, that doesn't allow for things like async and await programming, so it's a big limitation towards that modern, scalable stuff. That said, to use FastAPI, we've got to use, to really leverage it, we've got to use one of these more modern web servers. So we're going to talk just super briefly about that. You may have heard of data classes. And there's another set of classes, another library called "pydantic", which has similar structure as data classes and actually integrates with them in some ways, but there's a lot of the same types of validation we've already spoken around about for FastAPI. It does that for just General data Exchange. So JSON data, dictionary data and so on, and FastAPI is built upon these pydantic classes, so we're going to see how we work with them for defining our schemas, for validating what comes in, for converting the types, all that kind of stuff. And we'll see that the things we've already mentioned above, especially type hints, will provide automatic rich editor support. FastAPI has even its own PyCharm plugin. I think maybe it's just the pydantic, one of those two, pydantic or FastAPI has its own PyCharm plug in, and because they put type hints all over the libraries, the editors that can leverage type hints to give you more help will do so, and that includes PyCharm and VS Code.

|

|

|

transcript

|

3:34 |

Now we have a little bit of code to start from on this one, and that's just because there's a bunch of things that's not worth you watching me type, but we're just going to extend it with this idea of type hints. So over here have a program called "No Types Program". That means it has no type hints, no type information. None of that. And let's look at what it does. It's really simple. It has this running maximum of, basically it lets you order things off of a menu, like breakfast or dinner or whatever, and then it will figure out how much does that cost? And it'll actually keep track of what is your most expensive meal that you've had this, today. So this is the program that, given a set of things from the menu, is supposed to, does not yet, it's supposed to add up what all the items are. And then down here, we're gonna create some items, like we've got this item class, item named tuple that can have a name and a price, or name and a value, something like that. And then we're gonna create a couple of lists, and we're gonna have it to add up that, add up the dinner item, add up the breakfast items, and we'll see what the most expensive one was. It's fairly contrived, but I just want to show you some of the pieces here. So first of all, let's look at this counter. When I just look at it, the fact that this is plural maybe tells me potentially I could like loop over it. I know I'm supposed to add up the prices. We'll do "for i in itmes" do something. What do I get? What are these items? Now something a little bit funky is gonna happen. I think PyCharm was going to tell me what I can do if I hit "i.", what is available here? Check that out. It says it has a value and it has a name, and these come from this item. Well how the heck did it know that? Normally it wouldn't, but because it sees us creating only item lists and passing them over there, it's like "ah we're pretty sure this is gonna work". But if we happen to have not been using it, do this. Now, I come over here and say "i." what do I get? Nothing. Absolutely nothing. This is all entirely useless and not relevant. So how do I know what I can do? How do I know I can loop over these things? How do I know that I can work with it? If the program can't figure out what you're passing through the entire flow or this is a library that you've built and you hand over to somebody, it's not being used yet, how would the editors be able to help you at all? And how would they be certain that that's right. Similarly, over here, we've got this max value, running max, we want to do some kind of test. I mean, it probably looks like this. "If total is greater than running max, running max equals total", however, this is gonna crash. Why? Because you can't compare a number against none, so I'll just say, "if not running max, or" like this. Okay. So what is this running max supposed to be? Should we really set it to, is this an integer? Is it a float? I don't know. What we're gonna do is I'm gonna go ahead and just make this work here, and then we're gonna improve it by using type annotations. So let's go and put this back. We could go up and look at our definition. Oh, yeah, We have a value here. Also be "total += i.value" I should add up the prices. There's our max. And then if we go and run it, alright, give it a shot. Oh, look at that. It works great. Let's create some items. Dinner was $38. Breakfast was $23. Our most expensive meal was $38. So this is great. The program is fine. There's nothing wrong with the program, but looking at it and knowing what to do, well, didn't give us much help. And if I get it wrong, does it give me any help there? Nope. It doesn't say" there is no other", It just says "Fine, that'll probably work. We really don't know what we're doing, we can't help you". It would be nice if the editors and other tools said, "no, no, no, there's no other, this is not gonna work. I don't know what you're thinking, but it's not gonna work", right? So we can add type hits to this program to fix all of these issues.

|

|

|

transcript

|

2:41 |

Alright. Now, let's just make a copy. So we have this old version without any type hints, and I'll have one with actual hints, types. So let's just set that to run. See, it still works, but what I want to do is add some extra information. I would like to say that this can be an integer except for, hold on, PyCharm now says there's a problem. You said it was an integer like 1 or 7 or -3, that's not what none is. None cannot be interacted with as an integer, right? So when we have a type that is either none or something, we have to say this is in optional of that thing. An optional is not built into the language. Some languages have really cool syntax like that to mean it could be none, but we don't have that. So we have to import this from typing, okay? So now it lets us either have it as an integer or none, right? So now we have described this as either being an integer or being just none. Down here, let's go down, it's going to know that this could be an integer because it's set to zero. Probably don't need to do that. But remember, we saw this "i." We got some help, but only because we were calling it. Take that away for a second. And now if we say "i." again, no help. So the next thing to do is say what goes here. So what we can do is say this is an iterable. Now again, this doesn't come up just like optional. We've gotta import that from typing, and that tells us that we can put it into a loop, but it still doesn't tell you what it is, so we can say it is an iterable of this item, named tuple, if we say that, check this out. Here's our value that we're looking for. Perfect. And if we had other, all of sudden no, no, no, you can't have other. There is no other. There's item and there's name. Sorry, there's name and there's value. Again, all of this works. We might as well go over here and say this is going to return, I think if we look at the items we create, no, no, these are integers. So we can just say this returns and int, alright? Perfect. Perfect. And now if we call it down here, nothing. Nothing should be changed. But you can notice that if we were trying to pass in, like here, 7, It'll give you a warning like, "hey, 7 is not iterable, we expected something you could loop over, not a 7". Alright, so let's just run it one more time. Make sure it still works. It does. But now, not only does it work, it works better with the editors because of this kind of stuff and the validation for verifying what we're passing in and what we're getting back. Perfect. So this is the idea of type hints. It's super valuable in general, but it is extra useful in FastAPI because not only does it tell you what the program does, FastAPI actually takes that and uses it for things like type conversion and whatnot.

|

|

|

transcript

|

1:17 |

Now that you saw how useful type annotations can be, or type hits, let's just review the concept of them really quick. So here we have a function with no type information. We have some running max. It's set to nothing in the beginning. What's it supposed to be set to? I don't know some larger thing, that could be the largest order, that could be the value of the largest order, who knows, right? There's no information communicated there. Also, here's a counter function. It takes something. Based on the name being plural, I'm guessing I could loop over those things, but I'm not 100% sure. And then there is a total, probably know what to do with that, right? So this is not super helpful. Adding types lights up a lot of insight into how it's meant to be used. So now we have a optional int. So it starts out as nothing, but it's supposed to be set to an integer. And here we have a counter. It takes terrible things so I could loop over it. And what's in them? It's an item. Because it's an item, the editors know that it has a value and a name and it says "we return an integer". So just reading this code without doing anything else or seeing how it's used, we know much more about what's possible as well as our editors know what we should be able to do. So if we try to, add a, you know, some kind of field lookup or something that doesn't exist, while we're looping over the items, it will be able to help us and say, "No, you're doing that wrong. Its name or value, those are your only two choices". Cool, right?

|

|

|

transcript

|

2:30 |

Async and await is definitely one of the most exciting features added to Python in the last couple of years and FastAPI makes it really easy to use. It handles actually most of the juggling of all the asynchronous stuff, you just have to make your code asynchronous for it to be able to work with it. So let me paste the program in here, and it's gonna have two versions, a synchronous and an asynchronous version. So we're going to start with the synchronous version. Now notice it has a couple dependencies, those are all listed over here. So we're just gonna go and go into async, sync version, we're just going to run its requirements. So it's using the usual characters here. It's using beautiful soup to work with HTML, and it's using requests to make some kind of requests. So what is this thing going to do, anyway? It's going to go out to "talkpython.fm", pull up the page associated, the HTML page with that episode, it's going to download the HTML and use beautiful soup to get the header and use that to grab the title, okay? The way it works, just like you'd expect, it just goes one at a time, goes from 270, 271, 272, and so on and it says, give me the HTML for that one, process it, print it. So, easy right? Let's go do that. Notice there it goes, one, and then the next, and then the next. The Talk Python server is super fast, so it only takes five seconds to do that for 10 requests. Run it a few times just to see where it lands. Another five seconds, pretty stable. Even under five seconds, how fast. But here, let me ask you this question: Where are we spending our time? Where are you waiting for this response? I can tell you the server response time for these pages is like 50 milliseconds. But the ping time from here to the server is at least 100. So we're not even just waiting on the server, we're mostly just waiting on the vague Internet, right? Like the request making its way all the way over to the east coast of the US From the west coast, where I am. Could we do more of that? The Internet's really scalable. It would be great if we could send all these requests out at once and then just get them back as they get done. So what we're gonna do is we're gonna convert this from running in this traditional synchronous way to using the new async and await language features and the libraries that we'll actually make use of later as well.

|

|

|

transcript

|

4:08 |

Well, we've seen the traditional synchronous style with requests. How's this look if we bring in something new and fancy? And the big fancy new thing we're gonna bring in is "httpx". So this is much like requests in the way you work with it, but it actually supports async and await. So let's go over here and just see how we're using it. First of all, one thing that's pretty interesting is if we wrote code like, this was a synchronous, but it looked just exactly the same as before, you would see it's not so interesting. If I come over here and I run the old one, let's just run this real quick, you can see, it's doing exactly the same thing. It's getting an item, give you an answer, get the next item, giving the next answer, taking five seconds. But the reason is we're starting off one of these calls, and we're waiting for it. So our first thing we've got to realize is what we need to do is kick off a bunch of these asynchronous tasks here, right? So when you go to get the titles, we're gonna start all the tasks, so we're gonna go to this thing called an "asyncio event loop", you don't need to worry about this in FastAPI but when you work with it directly, you do. We're gonna create a task from some asynchronous function, right? This is stuff that FastAPI does for us. We're going to start them off and then we're gonna start them all going, and then we're gonna wait for them to finish and just process them in the order we started them. But, you know, as they all basically are working in parallel. So let's go see this "get_HTML" function. Instead of being a regular function, like this, it's an async function with the word async out front, which means we have the ability to use async and await within it. So down here we have this, not a regular with block, but a async with block from working with this httpx client. And then anytime we call something asynchronous, like if we do this get here, what we're gonna get back is not the answer, not the response, but a task or a co-routine that, when finished, would give us the response. And the way to say make the program give up its execution to anything else that needs to run while we're waiting and then give me the answer when it's done, is that right there. We just say "await this asynchronous call" and then it's up to the program to figure out, well, while we're waiting on this, maybe we could start a bunch of other calls. Maybe we could be processing the responses from other clients as those API calls get done or those http calls get done. So this basically tells the runtime, tells Python, look, "I'm waiting on this stuff. Pick me up when it's done", but in the meantime, you can go and run other code without even using threads. So this is really the magic line right there. And then we check to make sure it's ok, and we return the text. Remember, it was going "click, click, click, request, request, request, response, response" when we ran it before about every five seconds. How does it look now? They all start, they're all done. Just like that. It was five seconds before. Now it's less than one second. Let's run it a few times. They all start, They're all done. Isn't that awesome? Yeah, it puts a little bit more load on the server, but mostly we're just doing all the work while we're waiting, you know, waiting on this stuff to make it through the Internet. Right? So this is really, really cool. It is so much faster, right? It's six times faster. Well not that time. Right, there we go. Six times faster or so this time around, and all we gotta to do is write these async functions. When we had a regular call that happened to be async, we just put await in front, and now it kind of goes back to the way it was before, and that's it. I mean, let's just compare real quick. We're not using a client session on requests, so it's slightly different. But we have this, seeing a callback URL raise for status and return text. And now we have, oh wait. Call URL, raise for status, return the text. It just happens to be we're using this async client to kind of put that all together. Okay, cool right? So this is the async foundation, and you can just see it's so much faster, so much nicer, handling these requests. And this is the same way that our Web app is gonna work instead of being able to handle just one request at a time it could do 10, 20, 100 requests concurrently while maybe it's working with other API's, talking to databases, all those things. And working with async and await is what's required to unlock that potential.

|

|

|

transcript

|

2:25 |

We saw how powerful asynchronous methods can be. Let's just take a moment and review some of the core ideas around building async methods. Normally, we define a method like "get HTML" as "def get_HTML" but if you want it to be asynchronous, you say "async def get_HTML" right. We're gonna be doing that within FastAPI all over the place. And then we have this somewhat unusual construct called an "async with block" and that's probably the most unusual thing in async amd await. And what we're going to do there is the actual with Block is opening a network connection, opening a socket, and so we want to do that asynchronously. It actually takes a little bit of time if you just create these separately without the async stuff, it's quite a bit slower. So this lets us open all the sockets at the same time, and then we're gonna issue some of these requests. We're gonna say "client.get". Now normally, that's just a blocking call and you get a value back. But when you call an asynchronous method, which client.get is, what you get back is not the return value. It didn't even actually run. What you get back is something called a co-routine that if you await it, it will run and give you the value. So here, we're going to say, "await client.get", get the response, and then we just work with it normal. The big takeaway here is that where you see "async with", where you see the word "await", all of those points, if you're waiting on some external system, your program, in this case, the web scraper, more generally, probably the API server, will be able to actually handle other requests and do other work until this client gets back to us with its answer, then we pick it up and run with it. Often, people think of this async await as scaling the computation or like adding threads. Threads are not even really involved. What it lets you do is it says it lets you mark different places in your function that says "I'm waiting on something other than our program. I'm waiting on something external, you can go to other work until that gets back to us" right? So here we're waiting on the talkpython.fm server, could be a database, it could be something else. So it's this async with and await where we're marking, like, "here you can go to other work until I get this response and then please start me up and we'll keep running". And that's how async and await works, and because most Web applications are waiting on something else, they're talking to databases, waiting on them, they're talking to other API's, micro services, and so on, waiting on them, you can do a whole lot more work if you can find a way for your Web server to allow it to work on other stuff while it's waiting, and that's exactly what async and await does for us.

|

|

|

transcript

|

5:06 |