|

|

|

28:27 |

|

|

transcript

|

6:20 |

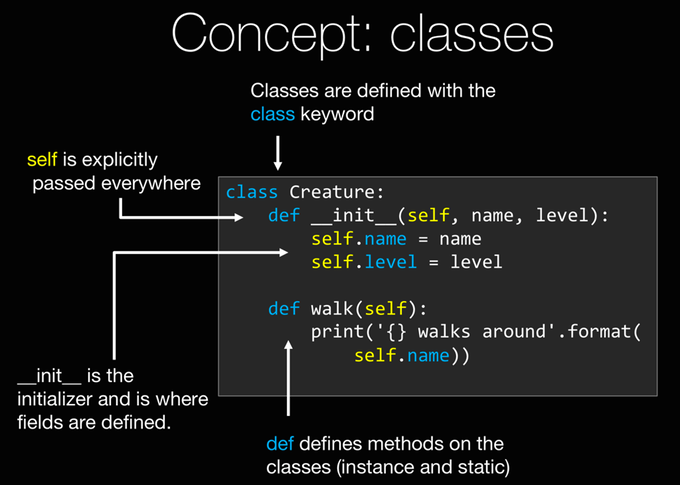

Hello and welcome to my course Python Jumpstart By Building Ten Applications. Whether you are entirely new to Python, or you have had some experience with it, but you would like to understand the language better, I think you'll have a lot of fun building these ten applications with me and learning the core language concepts along the way. I've carefully chosen these ten applications to make them small enough that you can focus on the essential language constructs while you are building the application, and there won't be too much extra stuff to distract you, but hopefully they are big enough and complex enough that they are interesting to you, and you'll see we start out quite simple, but as we build up they get more and more complex, more and more realistic, I would say. Welcome to the course, we are going to have a lot of fun! Let's talk about what we are going to build. We are going to start at "Hello World", and this in not just to test that you can write "print('Hello world!')", that would be kind of silly, we actually do just a little more than that, but we are also going to make the focus of this app to make sure that your setup is right, that you've got Python installed, that you are using the right version, it's in your path, all the various things you need to make sure your environment is working, your editor configured correctly, all those kind of things. Then, we are going to get to our first what you might think of as real app. Guess that number game, and the computer is going to randomly choose a number and it's going to ask the user, "hey, what number do you think I guessed" and it will sort of say, "too high/ too low," you may know this game. So that will let us work with things like user input, converting strings to numbers, conditional statements, booleans, all those foundational language constructs. Then, the next one we are going to focus on is a birthday countdown application, and this will let us really explore dates, time, differences between dates, the various things like that. Once you have finished with this app you will be very comfortable working with dates in the Python language, and the idea here is the user is going to enter their birthday, we'll then use that information to figure out how many days their birthday is in the future of that year, or how many days it's already been since it's gone by. The fourth app we are going to build is a personal journal or diary. This app is fairly complex, we are going to look at a lot of really useful and central features to the language. We are going to focus mostly on file I/O, but also partitioning our app into many different files and reusing those files to build up a more complex application. We are going to dig into functions, and a lot more. So, these first four apps will just run on our computer, and that's great, but we live in a connected world, so this fifth application is going to be a weather client. We are going to actually go out to the Internet and get the weather wherever you happen to be located. You will enter your zip code and out will pop a weather report, a real, live weather report that is up to date to the minute. Now we are going to use a lot of cool language features and packages to build this one, so we are going to focus specifically on how you go to the Python package index often referred to as PyPi, and grab these packages, install them locally and use them to construct something that will let us get our weather report. We are going to do some HTTP request, we are going to do some screen scraping, HTML parsing, things like that. At this point, we are going to need a little laugh. We've built these five apps and we've worked with the Internet, but we've only gotten text back, so this LOL Cat Factory is going to let us dig into the concept of binary files, so we'll combine the fact that we are going to go to the Internet, grab some binary data, in this case very important LOL Cat pictures, save those to binary files on the disk, and then explore them with Python. Have you ever played DnD or some of these types of games? Well, it's time for a wizard battle, so we are going to make a game where we have things like wizards, and dragons and so on, and this is kind of a text roll playing game, we'll create a wizard and the wizard will be part of this ecosystem where he battles different creatures and so on. Now, for this, we are going to really dig into the concept of classes, object oriented programming, inheritance, doc typing, all of the OO type building blocks that really make Python a powerful language. Number eight, we are going to build this file searcher so you can enter some text, and it will go through your system, your hard drive, look at all the different files, pull out the various pieces of text and give you little report on it. Number nine, we are going to build a real estate investor application. We are going to start with CSV file full of real estate transactions data, historical data for particular location, and then we are going to write an application that will let you answer questions about it, what is the average price of a house that has two bedrooms sold in this location, what is the most valuable house ever sold that had one bathroom and one bedroom. Those types of things. So we are going to work with this file format CSV which most of you should be very familiar with, but the idea will be easily translatable to other formats as well of course, and we are going to really focus on two Pythonic concepts here, two language features in the Python language that are fairly unique to Python, that is generator expressions and list comprehensions. Either way is to do almost database like query work in your procedural code, so it's super powerful, super interesting for answering the types of questions this app might ask. Last but not least, we are going to have a movie lookup app. So, in this application, it's going to go out to the Internet again, and give you information about movies, but the thing we are actually going to focus on is not so much the Internet part, we did that in five and six already, we are going to focus on errors, exceptions, error handling, things like that. What if you can't get to the Internet, what if the site is down, what if it returns data you don't expect, how do you write an application that fails gracefully or maybe it doesn't fail at all? So, that's what we are going to focus on, these are our ten applications. If you are brand new to Python I recommend you go in order. If you kind of know what you are doing, if you look at the second one and go, "I know all these things," feel free to skip around. But, I would encourage you to go and look through the list of the core concepts, each chapter, each application has a set of core concepts that are list out, and if you don't know those, be sure to go back and look at that first, because they kind of build on each other, even though they are somewhat independent.

|

|

|

transcript

|

3:22 |

What fun would it be to build these applications if you don't build them yourself? So, it's really important that you guys try to build these apps yourself. There are two ways you can do it, you can either, go to each chapter and see the description of the app, and then try to build it, then watch the lesson, that is maybe an advanced scenario, more likely you watch me do it, we talk about it and then, you put the video aside, you go and you start working on yourself. Either way, you are going to need a Git hold of the source code. So the source code is in a public Git repository, it's github.com/mikeckennedy/Python-jumpstart-course-demos. So let's go over there and have a look. Here is the repository, you can just simply download it as a zip, or if you are into Git like me, feel free to clone it, you can install GitHub desktop and clone it that way, you can clone it from the command line copying this here. So the most important part you'll find are the apps. So if you go into the apps you will see there is 0, 1, 2, 3 and in the setup one, we just have some links, stuff to help you get setup, those will be in the next video, but for each of the other ones, let's say... let's jump down here to journal. You can see for each particular app, there is a... you_try and a final. The final is exactly what I created in the video, so in the video, I actually created these three files and here is the exact code that I wrote for this particular application, ok? Not surprisingly, when it's time for you to try to build the app, you go to you_try. Now, for almost all the apps there is nothing to really get started with, you can just create a file, a new Python file, and get started, it doesn't matter where you put it, but I put the instructions in here for a few of the apps there will be resources you need to get started, for example that real estate app, the data files you need are going to be there to get started with. But, most of the time, I have something like this, and it will give you a picture of what we are going to build, so here is our birthday app... we are going to write an app that has this output, and you can see it asks three questions to construct the date, what year, day and month were you born, and then it does its work and it answers the question... well it looks like you were born on this day, and it looks like your birthday is in so many days from now. Hope you are looking forward to it. Down below, you can see that there is always the key concepts that you need to know, so for example, in this one, we talk about functions, so here is kind of the basics of functions, and we talk about "datetimes", so it shows you the various pieces of "datetimes" that you might need to use. If we look at another one, like, let's look at number 2... you can see this one talked about string formatting, conditionals, loops, importing modules, and there is just a little example here, this is not instructions that you follow, but these are sort of the key concepts that you need to know, so if you want to try it before we talk about it, you have at least a chance, right?, but for the most part I expect you to have watched the video and then you try it yourself. So that's the story the source code, the apps, the instructions, how you follow along. So, LET GET STARTED!!!!! It doesn't matter what you want to build, I am sure you are going to build something amazing with Python, and the time to get stated on it, and learning, and building, is right now. Let's move on to the next set of videos where you will learn about setting up your environment and then it will be on to the applications. Once again, welcome to the class, have a great time.

|

|

|

transcript

|

5:09 |

Before we get to the actual apps that we are going to build, let's talk a little bit about the tools we are going to use, how you use them up, and even just a little bit of history. The first question that you get a lot of times when you start talking about Python, Python apps, Python course, is... what version of Python is it going to run on? It turns out this is kind of a big question, so let's talk about that first. To appreciate this, let's look at the history of Python. In the beginning, it came into existence as a concept in 1989, it was released in 1991, had a couple of major releases, version 2 came out in 2003, today it's 2016. So, development started, version 1 was released, version 2 was released, and then version 3 actually was a major breaking change to the Python code. The developers look back over the 17 years of experience and said, what was good about Python and what things kind of gave people trouble, what would be a nice way to clean up the language, so that it's solid going forward, and they made these breaking changes to Python 3 and expected people to move along pretty quickly. Well, it's today, sometime in 2016 when this was recorded, and there is still a debate, should people be using Python 2 or should they be using Python 3, there is a lot of large code bases still running on Python 2, the majority of large commercial applications are probably using Python 2, even though it's been 8 years Python 3 has been out. However, there is something on horizon coming in 2020, they are going to make this a much simpler choice between the two languages. First of all, the changes between Python 3 and Python 2 are not that major, if you write code in Python 3 chances are, unless it's one of the advanced features "asyncio" or something like this, chances are it's going to work fine in Python 2, but there are older ways in Python 2 that are not forward compatible, and I think that's actually the bigger problem. So, if you learn Python 3 I feel like you have more well rounded ability to jump into either type of project, but that said, in 2020, just 4 short years from now, Python 2 is going to be end of life. No more bug fixes, not sure about security fixes, but definitely no more support, and to drive that point home, at PyCon, Guido Van Rossum, the guy who invented Python, started out his keynote with this saying... "there will not be an extension to this policy", this is it, only 4 years away before this being an absolutely clear choice, Python 3 is the future, and that's why this course is designed with Python 3 in mind. So, in order to do this course, you are going to need to install Python 3. And, we are going to talk about how to do that for each of the various operating systems. One question, is maybe you already have Python 3, maybe there is nothing to do, so just knowing where Python is available, that's kind of good background knowledge. Before we get into details to set it up each on these operating systems, let's answer the question- If I have Windows, do I have Python? No, you have no version of Python, Windows does not ship with Python. Unfortunately, it just doesn't. So, if you have Windows, you have users who are using Windows, you need to get them Python somehow, right? On Linux, chances are at least on Ubuntu, you have both versions of Python, that's pretty awesome, maybe it's not the absolute latest, maybe it's 3.4 and 3.5, but still, pretty darn good. OS X is somewhere in the middle, typically you have Python 2, but you don't have Python 3. A little like the choice between Python 2 and Python 3 is somewhat contentious in the Python community, so as the editor you choose. On one end of the spectrum you have people that use VI and Vim and Emacs, and these very light weight sort of shell friendly, ssh friendly editors, and that's actually a very large percentage of the professional programmer's population for Python devs, and on the other end, you have IDEs rich, full featured, large applications that bring all of your development tools together. Sort of the premiere one of those, is the one I have here, and the one I am recommending for the course is PyCharm, but we'll see a few other options as well. So, in editors like PyCharm you can edit of course and run your code, you can run unit tests, code coverage, look, you have a graphical visualizations of your git history and commit changes, there is all sorts of stuff you can do here. So I recommend to use PyCharm, when we get to the particular operating systems we'll talk more about that. But if for some reason you don't want to use PyCharm, there are other options. PyCharm has a free option for the community edition, but if you are on Windows, you can also use Visual Studio and then plug in the Python tools for Visual Studio, that's a nice free option, Microsoft has the community edition of Visual Studio and then the Python tools are open source and free, so that's a really good choice actually, just a bare notch under PyCharm, but really good, if you are into the IDEs, if you are not into the IDEs, choose something like Sublime text or Atom by GitHub.

|

|

|

transcript

|

4:18 |

All right, let's talk about how to install the various tools in Python on your operating system. Now, there are going to be three of these videos, there is going to be one for OS X, one for Windows and one for Linux. You probably only need to look at one. Just choose the operating system that you are currently working on, and watch that video, you don't need to watch the Linux one if you are on Windows for example, unless you just want to sort of see what the experiences are like across the different platforms. So, with that said, here is the OS X one, and the others will follow. So, there is only two tools, two resources you need to take this class outside the source code on the GitHub repository, one is you are going to need Python 3, remember, Python 3 does not come on OS X, Python 2.7 does, but Python 3 does not so you've got to install that. As well as PyCharm. So let's go look at these. I pulled up the websites that we are going to be working with, Python.org, this is where we get Python 3, PyCharm over here on jetbrains.com, we are going to download PyCharm here and I also pulled up the other three options, Sublime Text if you are interested in that, Atom, you've got to watch this video it's very funny, a great little light weight editor. We'll come over here, download this, quick, just it defaults you the latest of both Python 2 and Python 3 for your operating system, so you pick this, download, save, I've already done that. So let's go over here and see, if I type Python you will get something, but you'll see that Python 2.7.10 comes up, if I type Python 3, there is no Python 3, so let's install Python 3 and make sure everything setup good there. So, this is what I got of Python.org, just click through, agree to, whatever it's going to make you agree to... ok, so Python is installed. Let's just try a little trick again, we can even do a --version on the end, excellent, so we have Python 3 installed and it's the latest version. So, that's a good start. Next, PytCharm... when you just click download, it gives us a choice between what version do you want, the professional or the community edition, this is up to you, I love this tool, I paid money for it, I am getting the professional, the community is free, if you are wondering what the differences are, just come back here to the main PyCharm page and you can see, it will show you that actually the Python features themselves, there is not too much of a difference, but the web development, and Python web frameworks, and data base stuff, that is only in the professional edition. But, lucky for you, none of that is actually happening in this class so you can pick either of these that you wish. Once you have it downloaded, you will have "dmg"... disk image here, I love their little installer, here is the app, just drag it over here, wait a moment, and you should have PyCharm installed. Now, that's finished installing, let's check that, and we can just run PyCharm. First time it will warn you this came of the Internet, beware, yeah, we did that on purpose. Make sure you get it from right place. And, here is PyCharm, I've already run it before, but the first time you run it, it'll ask you for the settings, I like mine in this dark theme, so everywhere it ask you about colors, there is two places, you can say Dracula if you want the same theme as me, or pick another one. The other two editors are just Atom, here is Atom, nice and clean, and Sublime text, again, super small, super clean. Let me show you a technique that will be helpful for opening these projects in basically working with Python projects, in general. So, here I have Request Masters, I got this off of GitHub, this is the Request package and this is actually the source code, so here you can see, here is all the Python files, just like the project base, if I want to open this in PyCharm, I just drop it on here, this is OS X only feature, but if I drop it like this it will open the whole project, and see here is all the code that we need. You do a similar thing for Sublime text and you can do a similar thing for Atom. So, here is all the packages, same thing. So, that's a really helpful tip, if you are jumping from project to project and you want to just open up this project, open up that after project, open up before project, and so on, I am sure you will find that useful throughout the class. All right, that's it, this OS X system is ready to roll, ready to work on this class. Hope you are ready to go build app number 1.

|

|

|

transcript

|

4:11 |

Hello my Windows friends! Let's get you all setup and ready to work on this class and build those ten applications. And I have good news for you, until very recently, using Python on Windows has been actually fairly painful to get it setup and everything configured right, but with Python 3.5 the installer and the setup process is way better. So let's get to it. To get started you are going to need two resources on Windows, you are going to need to install Python 3, which you can get at Python.org, and you are going to need to install PyCharm at jetbrains.com/pycharm. Let's go over to Windows 10. Here is a brand new, completely fresh install of Windows 10 I just got form Microsoft, and I've opened up the various web pages we are going to be working with. First thing we have to do is install Python, and as I told you, there is no Python on Windows, if I open this up and I type Python, there is no Python. So, we have to download Python, and we want 3.5 1 or whatever the latest version of 3 is and I've already actually downloaded it so I won't click here, but you just click that, that's super easy. The other thing we need to download is PyCharm, so here is PyCharm, it actually comes in two editions, the professional edition, or the community edition. You can pick either for this class, the community edition is totally fine, the things you'll be missing are... you'll basically be missing on web development and database management features from the professional edition, and the community for straight pure Python has the same features as professional. If for some reason you don't want to use PyCharm, you want something more light weight, you can use Atom, at atom.io, Atom is from GitHub it's pretty cool, I really like this editor, you can see there is a little video here, I recommend you watch it, it's pretty hilarious. Sublime Text is also a super popular light weight editor, and I told you about Visual Studio, so you can get Visual Studio community edition this is now a free, full-fledged version of Visual Studio, and you can get Python tools for Visual Studio, plug this together, and you are doing pretty good. But, we are going to be using PyCharm in the class so that's what I will setup here. Let's start by installing Python. So I've got it downloaded, and I'm going to run it, now it has a couple of options in the installer, let's say if you are going to try to just type Python from the command line or other tools like "pip" for installing packages, you will probably want to add this to your path. And let's customize installation just to see what we get, we get documentation, "pip" which manages packages, we'll talk about that in our apps, and we have the test suite and "py launcher" is really nice and we don't need to install it for all the users. Let's go ahead and precompile standard library this will give us a little better perf, I really don't like this big long folder here so this app data folder is hidden in Windows so it's kind of hard to discover where these are so I am just going to put a Python folder directly in my user profile and then, in case you want to have 64 bit or 32 bit version of Python or maybe different types 2.7, 3.5 you probably want to leave this specifier here. That seems like a good setup, let's go. All right, Python was set up successfully, let's close this and let's just find out, if I type python --version which we should see 3.5 1 and... SURVERY SAYS? SUCCESS!!! Ok, Python is working, last thing to set up is just PyCharm. So the installer is just a standard Windows installer, just sort of yes your way through, it's up to you whether you associate py files with it, typically, I don't do that, but it's your call. Ok, it looks like we successfully installed PyCharm, that was easy, let's go and run it. Brand new, nothing to import, now, normally I would log in with my JetBrains account, but for this purpose I'll just evaluate it, say ok, that's great. The first time you launch PyCharm it will ask you what theme and keyboard scheme you want to use, I'll say Visual Studio keyboard theme, and I like my code dark, I have the editors dark and the code text to be light, so I am going to pick the Dracula theme, pick which ever you like, and there you have it, PyCharm is up and ready to roll! this brand new version of Windows 10 is ready to build our 10 apps So without further ado... let's move on!

|

|

|

transcript

|

5:07 |

Hello my Linux friends! Let's talk about what you've got to setup on your machine to do this class, in the same way that I am, at least, you will see that you actually already have Python and Python 3 installed on Linux if you are using something like Ubuntu, so that's pretty awesome. I'll show you where to go to get it if you don't happen to have it and I'll show you how to install PyCharm. It works wonderfully on Linux but it's a little bit of a pain to set it up so I'll walk you through that. So, here we are over in Ubuntu 15, brand new fresh version I literally just downloaded, and we are on the PyCharm page. So we can go and download PyCharm, you will see there is actually two versions, there is a professional version and a community edition, we are going to download the professional edition, you can get a 30 day free trial and if you pay for it like I do, then obviously, you can have it forever. The main difference between the community and the professional edition, the community edition is always free, is... the community edition does a whole bunch of cool Python stuff but it doesn't do web or database work, the professional edition does, in addition to standard Python things, web frameworks, type script, database designer type things. So, for this course, you can totally go by with community but for a professional work, well, maybe professional is the thing to go with. Some of the other editors you might choose if for some reason you don't want to use PyCharm, is you could use Atom, it is a really great editor from GitHub, I really like it and the video is hilarious so check out the video, just for a laugh. Sublime text is very popular, and of course, you can use Emacs or Vim that a lot of people are using. As I said, Ubuntu comes with Python 2 and 3 but for some reason if you need to download it, just come over here, Python.org, grab the latest version it'll automatically find the right thing for your operating system, you could also install it with aptitude, you can do things like apt-get install Python3 -dev, there is a couple of packages that you can install. So first, let's verify that I actually do have Python installed, Python 3 so I can say Python3 --version, and we have 3.4.3+, which makes it even better than 3.4.3, awesome, and then we have PyCharm, we are going to go download it, it's kind of big, so I actually already downloaded it, go over to my downloads folder, and we have the tarball (tar.gz) right here. So we need to decompress this and copy it somewhere, so I come over here, right click and say extract here, and it will extract it out. Now it has the version name here, let's find, let's make it new location, let's put it in my home, I like to create a folder called bin in my home and then here I'll make a folder called pycharm and within pycharm I'll put pycharm-5.0.4. Now if you open it up you'll see there is nothing to run right away but there is a bin folder within there and what we want to do is we want to run this script, so I could double click it, and it will just open in gedit, not the best, so I am going to come over here and just drop it into my terminal and run it. Now, it turns out, there is a problem, PyCharm is built on the IntelliJ platform, IDE platform, and that platform is Java based, so we need to install Java, before we can carry on. So on Ubuntu, we'll just use apt-get so we'll say sudo apt-get install openjdk-8-jdk. And I'll put in my password, I'll wait for a moment, and says are you sure you want to do this, it might take a moment 171 MG's, it's fine, go Excellent, well, that took a minute, but now we have Java installed, let's try to run that again, PyCharm shell, now it's running, you can see it says do you want to import previous versions? well no, it's a brand new machine so no, not really; normally I would just log in with my JetBrains account but for now, I'll just evaluate it for free, which you guys should be able to do for this class. When PyCharm first opens, it asks us what keyboard map and visual theme we would like, I am going to leave the keyboard map alone but I like my code my IDEs and windows and stuff to be dark, not bright, so dark background light code, so I am going to pick the Dracula theme for both the code colors as well as IDE theme, and I will say ok, and it can't make the change unless you restart, so yeah, let's let it restart. Excellent, my PyCharm is running, it's nice and dark with its Dracula theme. Now the one other thing I'd like to do is notice it's over here, and I kind of like to not be running this shell script anymore straight from the terminal, so let's run it one more time, notice it's gone from the launcher. Now it's up and running, I can lock it to the launcher, and now this way, when it's gone, I want to launch it again, I can just come over here and launch it straight out of launcher. Congratulations, you have PyCharm working on Ubuntu, it's time to head on over and build your first app and have a great time doing it!

|

|

|

|

13:38 |

|

|

transcript

|

1:14 |

Hello and welcome to the first app you are going to build. It's a Hello World app. And we are naming it Hello (You Pythonic) World. So, what exactly are we going to build? Well, it's going to look like this. We are going to have a little Hello World app and it's going to have a header that says "Hello App" and it's going to ask a question "What is your name?" And then, the user will enter something like "Michael". And you can see here, this is a convention we use throughout the course that the yellow indicates user input and the white indicates application output. So the user enters "Michael", and the app will echo back, "Nice to meet you, Michel" Now, you may not think that there is a whole lot to learn from such a simple application, but in fact, there is really quite a bit going on here. We are going to use the Hello World app not just to show the minimum number of characters you have to enter into an editor to get Python to generate an application, but in fact, to verify our Python environment, and to get started with our editors, as well as we are extending it to do a little bit more than standard Hello World which is maybe console out, but we are talking about basic syntax, strings, accepting input from the users, variables, that kind of stuff. So you can actually learn quite a bit, even from this very simple Hello World application.

|

|

|

transcript

|

4:39 |

So let's switch over to my Mac and get started. So to use PyCharm, it's pretty simple, you open it up, and you just say create a new project. You can see there is a lot of different kinds of projects we can create, we are just going to do a pure Python app for our beginning one, but you could create a Django, Flask, or Pyramid web app, even a Google App Engine app, and there is also a lot of HTML, Javascript options because PyCharm is a full featured Javascript, CSS, HTML editor, in addition to being a great Python editor. So I actually already have a project I created just by basically saying ok, on the next screen. And when you create a new Python app, a pure Python app, you end up with basically a blank folder. So, we are going to come over here and add a new Python file, so right click and say new Python file, and throughout this class, the main program that you'll run, that indicates sort of the entry point into this set of scripts, or Python files that we are building, is going to be named program.py, so I am going to create something called program.py, I am using Git to store all the files and demos that I create for you during this class which I will make publicly available for you to look at and see how they have evolved over time and you can see that PyCharm is actually suggesting that we add this to our Git repository I was going to tell it to please don't ask again. Now, remember, the first thing that our little sample app had was it had a little header with some dashes and it said "Hello App", and it sort of was bracketed by kind of a dashed line above and below. So let's go back creating that. So we are going to use something called the print function. And this is typically the way that you output things to the console. We can say print, and then here we can put s a string. And in Python, you can create string with double quotes, like this " " or you can create strings with single quotes, like that ' ' and I actually prefer the single quotes, it's just a little less typing, you'll see there is some times you might want to use double quotes, you might want to use single quotes, we'll talk about that later. So, we are going to come over here and we are just going to have our dashes like so, and then we also want to output that little "Hello App" part, so we are going to come here and say print, go over and say hello app, I think pretty much like that and we are going to say print, I am just going to do this one more time. Let's go and run this and make sure there is some reasonable output, first thing to do is save this. Now I could go to the console, to the terminal and just run that, I can easily do that by saying copy path, we go down here and I can type Python 3 and I can just give it a path, add hit go, and then out comes our output, hello app, perfect. But, PyCharm let us run this, and debug it, and do all sorts of cool runtime analyses of our app, right from within PyCharm. And you see there is a little debug icon here and a little play icon here, but they are both grayed out, and that's because there is no run configuration setup. So instead of going over here, we are just going to right click and say run the program and it will create a run configuration and save that for us going forward. So just like before in the terminal, we have hello app, very very nice. We also maybe want a little space between here, we can actually just call print with no arguments, and I will just do a new line. Now, the next thing that we did in our app was we actually asked the user what their name was. So we could actually get down to the system input output streams and directly work with those, but in Python there is a simpler way. We can just say input, now this also takes a string, and we can say something like "what is your name?" put a space and then, in the console the cursor is just going to stop right there and wait for the user to put some inputs, so let's just going to run that, I can click this, or this or I can say control r "^R", in Windows, I believe that's F5, and on the Mac it's control R "^R", and down here you can see it's asking what my name is, I'll say my name is Michael. Then, it waits and when I hit enter, it accepts the input and moves on. Now, that's not super helpful because we are not actually getting the name, we are just asking the question. So, let's take a moment and look at a couple of core concepts that we are working with here.

|

|

|

transcript

|

1:56 |

The first concept is around this idea of calling functions, print is a function, input is a function these are reusable pieces of functionality in our application. Here we are calling a function called main, and you can see that you call it with the parenthesis on the end some languages have semicolons to indicate the end of a line, thankfully, Python does not have that, and this takes no arguments and it returns no value so you just call like this. Now, the input on the other hand, we can call it and we pass it a string, kind of like print, but then it returns whatever the user inputted. So we are to create a variable called saying, we are storing the return value from the input function which is what the user typed in the saying variable we can work with that later. So that brings us to variables. And variables in Python are very very simple, they are sort of no-nonsense, so you just say the name, or the word you want to use for the variable and then you assign to it and... SHAZAM!! the variable exists. Some languages you have to specify types and initialization and so on, Python is sort of as simple as it can be around variables. So here we have a variable called name, and we are assigning the value of Michael, we have a variable called age and we are assigning 42, and we can even increment this value of the age, we are doing a "+=" operations, so we are taking the age, adding 1 to it, now the age is 43, so happy birthday to me. We have more complex variables of course, here we are creating what is called the list and we are populating the list with 3 strings, so biking, motocross and programming, and then we are storing that list holding those objects in the hobbies. So, all of these are directly created upon assignment, we can also create variables and store return values from functions, so here we have home address and we call in a function and we are storing the result of that function into home address. So let's go back and use these ideas to finish up our "Hello World" app.

|

|

|

transcript

|

2:14 |

So back in PyCharm we need to grab hold of that value that is returned from input. Let's be really explicit and call this user_text or something like this... ok. So in Python, when you have complex words or variables and functions made up of multiple words, if you will, you typically separate them with underscores. Other programming languages might write "userText" like this, where they use camel-casing, in Python it's all about the underscore. So, we are going to call it this, so that gives us the user text and we could actually just print this out just to see what they entered, so let's run this really quick say "I entered this", if I hit enter it just says "I entered this", excellent. So we have got that value and print it back out. That's not exactly what we want to do though. We want to actually store some kind of saying that we want to say and I believe it was "Nice to meet you", whatever they entered for their name. So we'll say greeting = "Nice to meet you " and we want to sort of somehow incorporate this string here so in Python, they make it really easy to combine strings just using the "+" sign. So we'll say, nice to meet you "+" user text and we can just print out that greeting, give a little space so it's easier to read, so let's just run that, and see what we get. So down here, we can enter our name which will be Michael, hit enter, and it says "Nice to meet you Michael". Perfect!!. So now, we've completed our first app, you can see we've worked with the print function, we're calling it with parenthesis, passing a string literal, it's what you call it when you create them just in place like this, passing these string literals as arguments, sometimes were not, calling the input function, again passing strings, catching the response, the input into a variable called user text, we are concatenating to another string, a literal string plus a variable, storing the greeting and we are printing it out. All right, pretty simple this application but it really gets us off to a good start to start building more interesting complex applications that build on things like file i/o and web services and all sorts of cool stuff like that.

|

|

|

transcript

|

3:35 |

Now, before we move on to our next application let's take a quick tour of PyCharm. So, PyCharm has so many features, it's very hard to just sit down and show them to you. But let's just take a moment and hit the highlights. So on the left over here we have our project, folder, and this can be quite complex, in real apps you might find many subdirectories, multiple files, all sorts of stuff, we'll get to that later. But here we just have our directory and our program.py, and it actually shows the external libraries that are set up into the particular Python environment that we are working with. So you can see that we have things like Pygments, and Chameleon which is a web templating framework and mailchimp for managing subscribers, things like that. Not super important for us now, but this sort of lets you get at your active Python environment. We can also go over to the structure, and the structure for this app is frankly boring, because it just has a few variables and that's it, but if we had functions which were using classes and these classes were defined with more functions and fields and attributes and properties, all this kinds of things, you would see this would actually be quite interesting, you can use all these little pieces up here to slice and dice them. Down at the bottom, we have our run configuration, or a place where we would run our app you can see there is actually two of them running, that's kind of a problem, we'll talk about how to fix that. So we can come over here, we can run it, we can stop the running one, you can see this one as a green dot because it's running, so we can stop this... it's all good. We can also have TODO's so we could come over and put a comment; comments in Python start with "#" hash so I could say, # TODO "this needs cleaned up", something like that and I could say something like TODO. All right, so now if you look down here you can see it actually shows us our TODO's PyCharm highlights them very nicely up here. Take those back out but that's what this section is for. Here is just a Python console you can... as if you typed Python in the terminal or the command prompt, here is literally a terminal, and here is the version control that we talked about. There is support for all different kinds of version control, you can see we are actually working on the master branch in GitHub. Over here, on the right we have a database section, some really excellent database management and design and querying tools built in the PyCharm. We don't have a database that we are working with right now, but if we did, we would connect it here and like it do all sorts of cool stuff. Finally we have sort of run, debug and manage version control shortcuts up here. We come up here, we can look at the configuration you can see we can actually pass parameters like if I want to just hard code a name and pass it like this, this would be passed to our program when it runs, we are not going to do that but we could you could pick which version of Python you want to run pass additional options to the interpreter itself there is all kinds of things that we can do here. Remember we had two instances of that app running that was kind of weird so we can check off this single instance only and that actually fixes that problem, so you only ever have one running at a time. Now when I run it, if it's running and I try to run it again it will say we are going to restart it for you, so I often say don't bother me with that just run it again whenever I ask, ok? So like I said, there are many things you can do with PyCharm, stuff with version control, stuff with managing virtual machines, all kinds of stuff that we just don't need to get into right now but what you saw should give you a really good jumpstart so you can be effective with PyCharm right away.

|

|

|

|

19:15 |

|

|

transcript

|

2:15 |

Hello and welcome to app number 2 this time we are going to build a game, a guess what number I am thinking of game, and it's going to look like this. The program will start up and it will randomly choose a number between 0 and 100 and then it will interact with the user going back and forth until they figure out what that number is. It will ask them to enter a number and it will say, no, that's too low, no that's too high, and then finally you can see the user was able to figure out, hey, 71 was the number the program selected. So, this is a pretty straight forward app, but you are going to learn a lot while we build it. So what specifically? Well, we are going to focus on boolean statements and switching between code blocks. So we are going to start up by talking about boolean expressions, things like x > y, y is not nothing, the users entered some valid text those kinds of things. And we'll use that in two basic places, one is going to be an if, else if categories so if the number is greater than the one you guessed you want to print one thing otherwise you might want to print something else like the number is too high no in other case print the number is too low but also in while loops because we want our app to go around and around until the person, the user has selected the right number they've guessed the right number. We are going to use a conditional test to keep going until the numbers match. Closely related to this boolean concept is something I'm calling truthiness. You'll see that objects within Python are embued with a truth or falseness we'll leverage that as a key building block in our boolean expressions. We'll also learn about type conversion users will enter text but we need to actually work with numbers. Previously we composed strings by using the plus symbol but Python has an extremely rich formatting API and we'll start looking at it here we are going to just get into the very basics of function as a way to compose our app into more understandable and maintainable building blocks and part of that is going to be understanding code blocks which are a little, let's say unique in Python.

|

|

|

transcript

|

4:26 |

So let's jump into PyCharm and start building this game. Remember, our convention is that we are going to start out with the main thing we run called program, so our new Python file called program.py and our app, as the very first thing it had at the top a header so remember we'll do print and we had a bunch of dashes here. Now in PyCharm, if you want to duplicate a line or a selection or a set of lines, you can hit command D in OS X or in Windows or Linux control D. So I will hit command D a few times, it's a little bit of a shortcut. And then it said guess that number game, something like that, let's do some centering, and finally let's do a print, just to leave some space there at the end. Before we go any further, let's just run this really quick to make sure everything is working, again, there is no run configuration up here because this is a new project, so we'll right click and say run, and then all right, a little header is working fine. Now, the next thing we need to do is come up with that random number and the number the computer guesses so I will create a variable called the number and now, somehow we need to... here come up with this random number and one of the great things about Python is it comes with all of these modules and libraries that we can use one of them is called the random library, or the random module Now, PyCharm is not happy, because we need to actually tell Python we intend to use a module that is not what is called the built in this is like an extra part of Python so we need to explicitly state I'd like to use this part of Python this works identical to whether it's something in Python with the standard Python implementation, or something external, like some other library that you've gotten. So we are going to say import, and we just say random, and then we'll say "random." now we have a bunch of options, a little warning went away, the one we are interested in is randint we'll come over here and call randint and if we hover here for a moment or pause for a moment, it will say it takes two parameters a and b. Now, so I would guess that those are numbers, and I'm sure you would as well, and that they somehow specify the lower and upper bound that we are suppose to work with here but is this including those n points, somehow between these n points, and we can check the docs, but it turns out that this actually includes both n points so what we want is 0 to 100. Let me show you something that PyCharm can do for us that really is pretty amazing and one of the things I really like, imagine let's roll this back for a minute, imagine that we don't have this import going on, and we don't have this line, so here we are again and we want to say just like before the number is equal to random. Now PyCharm has a little red light bulb you can see there and it says, Hey, this is not right, but I am pretty sure I can fix it for you, automatically. So if I hit "alt enter", it will come up with the list of options to fix it, so I could actually just hit enter and it will say we are going to import random and there is a couple of ways we could do it but this top one is what we had done before, so I can just hit that, and you could see right at the top it added import, random, now I can type randint and off we go. So, a lot of times when you see an error like this, one of these red squiggles and this little light bulb in PyChram that means that there is some kind of auto fix it's going to propose for you and it is probably worth checking out. Ok, excellent, so we have our number guessed, our number between 0 and 100 inclusive, now the next thing to do is get some input from the user and we are going to just tell the user hey, enter a number between 0 and 100 so I'll call this guess, and this is going to come back as text so I'll be very explicit and say guess text so we'll say input, just like before, guess a number so guess a number between 0 and 100 and we'll just let the cursor stop right there, and we'll wait for that. Conceptually, the next thing that we are going to do is we want to compare the number against the guess text. Now, Python is not a very forgiving about a quality between numbers and strings, these don't really have anything to do with each other, so if we tried to just say print out whether the number was... let's say less than the guess text, if I try to run this you will see it'll actually crash, so if I put in 42, and it says you cannot sort between or compare the order of integers and strings together, right?. You can ask whether they are equal, they will never be equal, but you could ask that question, but you cannot do the type of comparisons that we need for this test So the next thing we need to do is actually take this guess text and convert it into an integer and we'll call that integer the guess so this is super easy, the int class is just built in and we can call it, initialize like this we can pass it some text and then what is going to come out is actually a guess so we could say really quickly print the guess text and you can actually ask for the type of these so let's print those two out and here we'll print the guess and the type of the guess so if we run this two, if I enter 42, you can see, the first line where was guess text is actually a string, "str" is the string class in Python we've actually converted successfully to 42 it looks the same when you print it out but it's definitely not the same type in memory. All right, the next thing we need to do is write some conditional statements with if and else statements and some comparisons and so on in order to determine whether we have won the game the guess was too high, too low, that sort of thing this is one of our core concepts from this application so let's go dig into that.

|

|

|

transcript

|

2:39 |

One of the fundamental components of any programming language is the ability to make decisions, decide to go down one path or the other based on some tests you can run. And in Python, this is the if, elif, else block, so let's look at this co de sample we have here. We have some command we are trying to get from the user, imagine this is a command line app you can enter commands to run or execute at this point in our application. So we are going to ask the user to enter either L or X but of course they can enter anything, right, that's just open text they are going to type in, so we also account for that. We have these two commands we are going to work with, let me say if the command is equal to L the string L, the way you do equality in Python is double equal so command == L then we are going to indent and run some code we are going to list the items if it's not that case that it's L, then we want to do another test, we'll say well maybe if it wasn't L, else if, which is abbreviated in Python to elif, the command == X so maybe the command is X, in that case we're going to call exit and if it's neither of these two things we expect, well we can just print some message like sorry I don't know what you meant by whatever it was you typed in this is not a valid command. So these conditional statements always start with if, and then they optionally have an else if and they optionally have an else. And of course, this is a key building block for our game to decide if the number is too high or too low. So, we've seen that you can do equality test, ==, we can also do less than "<", greater than ">", these types of comparisons that are pretty conceptually straightforward. But in Python, you can test any object, anything that is a variable you can put into an if statement and it will either evaluate the true or false, and there is a simple set of rules around when a thing is going to be true and false a lot of code in Python leverages this concept for conciseness which I am calling truthiness. So the rules are like this, there is a set of defined things that are false, obviously the word False capital F is itself false, but also collections, lists, sets, dictionaries, and so on that are empty, by the fact that they are empty, that considers them to be false. Strings, treat the same way, for all sorts of numbers, if the number is 0 then it is false, otherwise it is true. And of course any object can point to an actual thing or it can be pointed at nothing, in Python we call this None, other languages call it null or nil, in this case, when you have a variable that points in nothing, that is also false. So, if you are not in this short clear set of things that are defined to be false, then it evaluates to true. So we'll see a lot of code leveraging this concept around loops, around conditionals, and so on.

|

|

|

transcript

|

3:14 |

So let's write the test for our code to see if the guess that the user entered is correct. So what we want to do is want to say if, and then write a conditional comparison statement, a boolean statement, so we can say if the guess, not the text remember, the actual number is less than the number, then we want to print something out and for defining these if statements and all sort of code blocks into Python we use a colon to indicate we are about to define a block of code that is going to run in some situation maybe it's a function, maybe it's a loop, in this case, it's a block in our if statement. So when it's true the guess is less than a number, we are going to print out something like too low, the guess is too low, the guess is lower than the number the computer came up with. So that is one part, next, we are going to ask else if it's the case so we'll say elif again, if the guess is too high, if it's higher than a number we'll print too high, like so, and notice, when I hit enter, when I type this block, I will just go back for a second, if I type x and hit enter, or complex or whatever, PyCharm wants to correct it for us and I say test and I hit enter, it goes back to the same line here, but because PyCharm knows about the structure with colons, if I go and I type something like if x: enter, it automatically indents for me, so you will see these Python intelligent editors like PyCharm make it really easy to write code correctly. So we have if, indent, print too low, else if "elif" guess is too high, print too high, and the last thing I want to do is say else: and we'll print you win. So there is a few more things we are going to need to do to make this work just the way we'd like but let's go and run it and see how it's working. Ahh what's a good guess?, 54 it must be 54, too high, oh, well the way game is supposed to work it's supposed to say too high, now you've got another chance. So what we actually need to do is go around and around, and that means we need to find something called a loop, and the type of loop that work really well for this situation, in Python is something called a while loop. So you say while, and then some sort of condition goes here that you want to test, and as long as that is true, you are going to go around and around and around, so we want to say long is the guess is not a winning guess, we want to keep going around so we'll say guess, you say equals like this, remember, and if you say not equals you say exclamation equals != So now we'll say colon, we need this whole if statement/else if part to run, while this loop is going, and the way that we do that in Python is we indent it so I can highlight in PyCharm and hit tab and it is going to indent everything four spaces for us. And then when we are no longer indented, if I print something down here, done like so, you can see this is actually out of the loop. Now, if I run this something really bad is going to happen, we are going to end up in what is called an infinite loop, because, we get one piece of input here from the person we convert it to a guess let's say it's 52, who knows, whatever, and when we go in here and unless you guess the winning number, it is going to go around and around and around either say too low, too low, too low, or too high too high, too high, and it's never going to give the user another chance to enter this text. So what we need to do is make sure that we ask this question, and do the conversion within the loop. Ok, so remember intend, so it's part of the loop, we'll talk more about that in a moment, so when it get the number, and then we are going to convert it and so on. Now, you can see PyCharm has this sort of highlighted as an error and it says guess cannot be defined after it's used. So basically we need to give some kind of value for the initial test so we get into this loop at least once so we can pick something like negative one or something that will never be a winning guess, that will make sure that we at least run to this scenario one time, now let's try it. 54- too high, 40- too low, 45- too high, 43- do I feel lucky- YES!!!, I was lucky, awesome. So, that is how the game works, we can do a little bit nicer stuff but let's take a moment and pause and look at this concept of this indentation and defining code blocks that run by using indentation. This is one of our core concepts that we are introducing as part of this app.

|

|

|

transcript

|

3:12 |

A core concept of this application is to understand the shape of a Python program. So far, until very recently, we've been just writing straight top to bottom code, with no blocks, no functions, no conditionals or loops or anything that has to be disambiguated in that sense. And now, what I want to focus on is this concept of a shape of the Python program. Everything you see on the screen that is in the square, the gray square, the blue squares, the purple squares, those are all separate code blocks, sometimes called code suites in Python that are executed in some particular manner. The blue blocks are functions that are executed when the functions are called, the purple blocks are if or else blocks that are executed depending on whether the argument is batch or something else. The key thing that defines code blocks in Python which makes it quite unique in this regard, is white space. Many C derived languages use curly braces, think of Javascript, C#, Swift, those types of languages, they all use curly braces and when you open the curly brace that begins a block of code, and when you close curly brace that block code is now done. It doesn't work this way in Python, it's all about the indentation, so you can see the gray block which is just the overall program and we have two functions we are defining, we have def main and we say colon, and then we indent all the body of this function. And that body is made up of those two blocks in there, there is two purple blocks sort of the if else statement. Then we unindent and we define another run function the details don't matter, we say colon and indent again and that's the body of the function for a run. So you've already seen previously that editors like PyCharm and other Python intelligent editors know about this shape, they know about this code blocks and if you type if something colon enter, it will automatically indent and stay intended until you unintend it manually. While it might seem a little challenging to use spaces to define a structure, it turns out the editors know all about it and make it super easy. One more comment I should add while we are talking about indentation or white space, tabs? tabs are not a good idea, in Python, Python does not like tabs. This white space should be actual the space character, not tabs, now that doesn't mean that you don't press tab in your editor like in PyCharm if you hit tab it will indent but it actually just means it's going to insert four spaces for you and many of the Python editors are like that. So if you are coming to Python from another language that does not use significant white space like C++ or some of the C based languages this could feel a little strange to you and it does take a week or so to really become super comfortable with this idea but once you do it's very natural and it's a lovely way to program but I know that some of you out there you are thinking WOW! this is really different and to you I say just give it a try, use a nice editor and you will start to love it right away. if this feels totally natural and good to you, well then you are going to love Python right from the beginning.

|

|

|

transcript

|

3:29 |

So we have our app working but the user feedback could be a little better let's go down here and try to improve this message we sent to the user instead of just too low, let's say something like your guess of... and we can put in this number this guess they had here we saw that we could do this by combining strings by saying guess so maybe you'll think well this is a way I can do this here and this will just combine it that does work in certain languages say your guess of let's say 70 was too low, something like that. Now, PyCharm is kind of giving away the surprise here, but this is not really love that says the string int conversion with plus is not going to work out so well for you So let's just run it and find out. So if I enter something that is going to be for sure too low like 0, let's see what happens, you get this exception, you cannot convert int object to string, implicitly. Well we technically could explicitly convert it but it turns out that there is a much better way in Python. What we want to do is we kind of want to put something into this space, now the way we can do this nice way is we can use these little curly braces inside the string and then call a function on the string called format and pass whatever we want to go in there. And so, into this location we can say we'd like to take the guess and explicitly convert it to a string, whatever that means, this time it's a number, so that is pretty straightforward, and then we are going to put the result of it right there. We can actually pass many parameters here, we could have something like name, like let's change this really quick name=input player what is your name and these little numbers that go in here talk about the order in which there is specified in the format method. So guess is first, everything is zero based, so it gets a zero, name is second, so it's {1}, so we could say sorry {1} your guess of {0} was too low. Now let's go and run this, see if everything is working, enter your name, my name is Michael, enter a guess, I am going to put 0, sorry Michael your guess of 0 was too low. Now, we are just scratching the surface of the power of this format method. We can if we wanted to switch the order here, if we specify the place holders in the string in the same order as they are specified as arguments there we can actually omit the number now this will say sorry name your guess of... whatever the number was, was too low. We can put in format specifiers here to say I'd like to separate this with commas or convert it to currency, or all sorts of sort of format specifiers we can take dictionaries and name these things and pull items out of the dictionary by name and then put their values in here, it's very rich, but let's just go with this for now. So we'll do the too high case here, so your guess was too high, and finally, we'll just say something nice about winning excellent work so and so, you won it was something like this, right? so excellent work, whatever the name is and you won it was whatever the guess was. So, let's play the game again, all right, what's your name, now I've got to enter my name, it's Michael, I am going to guess 50- wow 50 was too high, 25- too low, 35, 40, 45 47, what do you think, 46, chances are likely, YES! excellent work Michael, you won, it was 46. See how we can use string format to really simplify and clarify how we convert values into strings.

|

|

|

|

22:06 |

|

|

transcript

|

1:35 |

It's time for app number 3, this time we are going to write an app that'll answer the question how long until your birthday. So what exactly are we going to build? We are going to build an app that looks like this it's going to have a little header saying birthday app like all of our apps do, and then it's going to first ask a couple of questions of the user when were they born, so ask the year, month and day, and then pull those back, those will come back as strings, we'll convert those to numbers, and use those numbers to actually come up with a date object, that represents a particular time on the calendar, and then we'll do a little math with that date, relative to today, and we'll use that to answer the question how long is it until your birthday and if you have already had your birthday we'll say hey your birthday was so many days ago. So again, that looks pretty straightforward, but we are going to learn a lot. We are going to come back to working with functions and organizing our code into small, reusable pieces of functionality and in our previous example, we saw that we worked with functions but they were sort of the most basic, they were just things you would call to have actions and here we are going to have functions that both take parameters and so we can supply different values to them like a particular date and the return values, the things like how many days from this particular date until now. The other major thing we'll focus on is dates and times and time spans, so when you work with dates and times between dates, in Python these are the 3 basic concepts that you'll see and they will be central to this app.

|

|

|

transcript

|

4:25 |

Over here in PyCharm, let's start working on our birthday app. So as usual, we are going to come over here and pick a new Python file, and call it program. Now we are going to start out by having a header, then the next thing we are going to do is we are going to ask the user for their birthday information, and then we are going to compute the days between now and that birthday they entered, and then finally we'll tell them whether their birthday is coming up how many days, that sort of thing. So we could just write this out in one giant series of events, but I find it much easier to think about this in small little steps, kind of like I just described, so we can do that with functions. So we are going to start by actually defining a function and the purpose of this function is to get the input from the user and return it any time that we need it. So let's say get_birthday_from_user(). So remember, to define a function it always starts with a keyword def, and then the function name, any parameters if it takes none, you just have open close parenthesis and a colon. And then you indent to define the code block that is the function body. Notice when I hit enter, PyCharm automatically took care of that for me. Now, one thing we can do is we can just kind of sketch this out and if I want to define the function but not really implement it or write the details of it here, I can just say pass, right? So the other thing that we are going to do is we are going to compute the days between two dates so we'll say compute_days_between_dates(), all right, and all right pass again just so we can go on and sketch out the rest of the app. Now notice, PyCharm has put a little squiggly into here and there is a warning that says this is a PEP 8 violation, now PEP 8 is the standard for how code should be written in Python, you don't have to follow it but pretty much everybody does for the most part, and, one of the rules in PEP 8 is that when you have two functions that are stand alone functions, there is supposed to have two lines between them. PyCharm actually knows how to fix many of these and I can hit command+alt+L and it will do whatever it needs to fix it. Now the next thing we are going to do first we are going to get the information from the user, we are going to compute the days and then we are going to say def print_birthday_information(), something like that. And again I am going to pass... and there is one part I kind of omitted because I don't really think of it as an operation, but it is something we are going to do, is we are going to print the header, and let's just put pass really quick. So that is kind of the steps of our app there we are going to print out the hello, welcome, get the information from the user about their birthday, compute whether that is in the past or the future, how many days and then we are going to use that to print out the information. The last thing to do is actually just orchestrate this into a main method, so we'll define a main method, here some programming languages like C++, C# and so on, they require you to actually define a main method called main, there is nothing like that in Python, this is just a convention. So, over here, we are going to first start by printing the header and then we are going to get the birthday information from the user, we are going to store this in a variable but just hold on for a minute there, we are going to compute the days between the dates, and then finally, we are going to print the birthday information. And then we'll be done. Final thing is we are going to be returning some information from this, we are actually going to return a date object that represents the real birthday, so we'll call this b-day here, and this birthday we are going to need to pass off to this function, so we have the birthday, and we are also going to need to know what time it is right now and I am just going to put none because I don't really want to talk about that what that is just this moment, but we are going to get to that right away, so we are going to call get birthday from user, get their birthday, we'll compute what the time is now, we are going to compare the times between these and this is going to actually give us number of days, like so, and then we are going to pass this on here and we will print this out. So you can see that PyCharm is actually showing us that hey, you are trying to use the return value of this function, and over here you can see we just say pass they are not actually returning the value. So our next job is to go and implement each one of these functions, so this flow works as we expect.

|

|

|

transcript

|

6:04 |

With the general's skeleton or shape of our app in place let's go and fill out some of these pieces simplest want has to be this print header, right it's just going to look something like this, the title right the middle and then one more there's so we have our little stamp now the next thing that first thing really we're going to do is get the birthday from the user so we could ask them for inputting like a date, you know, year slash months last day, but then you've got different cultures have different styles, right? Like europe versus the u s we arrange things differently and then the partisan it's hard, so let's just keep it real simple like this, we'll just say, when were you born and we'll ask him, what year month and day separately we can use input for that so we can say year equals we'll give him a little hint here with this why? Why, why? Why now? I could copy this line for the month or i could just retype it, but in PyCharm you can actually hit command d and will be duplicate whatever you have selected if there's nothing like that will duplicate the line so it's really nice we just say month or here and update this and then again for the day some like that ok, so now we'll have the information when the person was born this is not gonna work exactly want to come back and fix this? But before we do let's go a little farther to see why next to one i dio is work with dates. You know, just like when we worked with requests, we have to import this module so wouldn't go up here. I want to say import daytime so this is a built in a library in Python you have toe install anything extra to get it it's just right there, but usually have to import it. So now we come out here and say something like that a birthday i say b day some like that equals when come to date time here and now there's a few options what we can do, we can work with date and time so, you know, year, month, day, hour, minute second type of thing or we could just work with calendar dates and there's some other options here as well. So we come in here if we want to work with daytime that includes the hours minutes, seconds we would work with the time dot date time it's a little confusing because, like, why do you say the same word twice? Well, i don't know that's just how they designed it this is the module and this is a class contained within their which we can do things like love here and say what's, you know, set the year set the minute things like that, we don't care about the time we're trying to compare days, not actual moments in time, so what? We're going to work with dates here? We could also we just cared about time you could work with that and what we're also gonna work with implicitly, we won't create one of these, but you'll see it, it will be created. But to sort of behind the scenes force is this thing called a time delta, which shows you the differences between two dates that's going to be important, so we're going to work with data now we're going to try to create the date that they've given us here, so we'll say, and our year, month and day, right? So that looks like it's fine. The next thing we want to do is give this back right that's the purpose of this function was to come down here, get some, do some or ask the user for this and then actually give back the birthday. So what given stages for term b day and down here or going teo, you just change his name really quick just so you can see they don't know no matter from like that so we couldn't hear, and we'll return this from this function that we're calling out here and storing this value and use it later in this section here. So this almost works now noticed pi charm is highlighting the stuff, and it has a reason for it. But let's, go and run and see why he's going to actually be too interesting outcomes here, first of all, well, let's, we can't run it because there's no run configuration, so we just right click and say run program, and you might expect to see things like this printed out in that and so on . But remember, there's no convention what this main method being called has just happens to be when i called it so in order for this to actually get triggered to do a thing, we have to come in here and call it now. This is not really the best way to trigger this function to be called there's a better way, way we'll get to that another video a little bit later, but for now, let's, just do it like this so we can get it to work, and then we'll talk later about how to make it better ah, there we go, birthday app, when were we born nineteen, seventy for april fun who that doesn't look very good that's not it's not a date i was looking for right so what happened here it says we had a type air that imager is required but what we got was a string if i click here to actually take us to where the problem lies who this is the thing PyCharm had highlighted it said that you expected editor but you're giving me a string and the reason is everything that comes out of input is always a strength so we can convert it to a number by just passing that to initialize or for and so when its rapid like this if you wanted a float we would do like that but we don't want imagers on something down here okay now we should get it to run past this problem it's going to crash in another place but that's okay we'll get there well we're making our way down to nineteen seventy four april first and now we get this compute dates is not ready that's over here but noticed this part works so let's we're not pass it we haven't configured these functions to take those primaries yet so it's put that on hold for just a minute let's just print out what we got here we put out our birthday when we were born in one more time anything said before april first boom looking at nineteen seven for april first so we've gotten a little ways down the path. In our application, we've gone to the user, and we've asked them a couple of questions. And we've taken a input and converted it first in numerical values and then to date values. So we're off to a good.

|

|

|

transcript

|

6:18 |