|

|

|

18:39 |

|

|

show

|

0:50 |

Hello and welcome to Data Driven Web Apps in Pyramid.

In this course, we are going to take the Pyramid web framework which is a Python-based web framework and we're going to build some amazing data driven web applications.

We're going to use Bootstrap which is one of the most popular CSS front end design frameworks to make our web app we build in Python look great.

Of course, almost all web applications access databases.

At least the dynamic ones do.

And we're going to use the most popular and powerful Python-based ORM called SQLAlchemy to write Python classes and map those to our database.

Welcome, welcome, welcome to Data Driven Web Apps in Pyramid.

We're going to have a great time building some real world applications and learning some real world ways to put them into production.

|

|

|

show

|

1:46 |

Before we dig into all the code that we're going to write and the technologies we're going to use and the types of things we can even build let's just take a moment and admire the incredible power of the web.

The web is one of the most flexible and certainly the most widely deployed and used technology out there.

Think about YouTube.

YouTube is an amazing web application.

It really has brought video sharing to the entire world.

It also happens to be a Python-based web app and handles millions of requests per second.

Think about Airbnb.

With this web application, and a unique sharing philosophy they've literally transformed the way people travel and stay in cities.

Netflix uses their web app to redefine TV for many, many people.

In fact, this web app and their infrastructure account for 35% of the bandwidth of the United States in the evening.

That's pretty incredible.

Hey, let's throw one more in.

You're taking an amazing course and you're going to be learning all about building web apps.

Here's another one, Talk Python Training.

This site is built, of course, on Python and Pyramid and has taught a ton of people about Python.

In fact, many of the techniques and the lessons learned in building this site along with some of the others I'm going to bring into this course.

So it's not just about looking out there and seeing some incredible web apps built it's also taking a pretty amazing web app that I have built and taking the lessons and techniques from that and sharing them with you with Pyramid SQLAlchemy, and so on.

Do you have an idea?

Something you want to build for everyone?

Well, the techniques and technology you're about to learn will let you unleash this incredible power of the web to bring your idea to life.

|

|

|

show

|

3:08 |

In this course, you're going to learn what's sometimes referred to as full-stack web development.

Now, we don't spend that much time on the JavaScript side of things but everything else we do spend a fair amount of time on.

So, what the heck is full-stack anyway?

Let's look at all the pieces involved in a typical web application interaction.

We have a browser we have our server running out on some cloud hosting we have usually a virtual machine or set of virtual machines in the background and our database we're working with.

So, a request is going to come in.

It's going to magically find it's way through the internet to our server.

Our server is going to do some processing talk to the database, and so on.

Where's the full-stack part come in?

Well, let's look at the technologies involved here.

On the server side there are a bunch of different technologies you need to learn.

We're going to learn Python.

Well, we're going to use Python at least and we'll probably learn little bits of it along the way but we're going to focus on using Python along with Pyramid the web framework that we're going to work with.

The dynamic HTML templates in this case Chameleon.

The data access layer SQLAlchemy.

In order to deploy and configure our servers we're going to need to know a little bit of Linux.

Learn that.

We're going to use Nginx and uWSGI.

These are two cooperating web servers that we're going to with to run our Python code in Pyramid.

Then we're going to talk to the database.

Well, what do you need to know for that?

Well, maybe this is Postgres or MongoDB.

For the course, we're actually going to use SQLite and also a little bit of touch of MongoDB at the end but it's easier to switch to something like Postgres.

You many need to know the query language to get to that like SQL, or MongoDB's query syntax it's like a JSON type thing.

We're not going to actually focus much on the query syntax because we're going to use SQLAlchemy which lets us sort of abstract that away but you would more or less need to know that in practice.

You also need to know how to migrate or manage your database over time as it's structure changes.

Look at all these technologies just on the server side.

That's a lot to learn and this whole stack of technologies put together is part of what's called full-stack but there's more to it.

Over here on the browser side we have HTML and CSS we have to deliver to the browser, right?

That's ultimately the goal is to show stuff on the screen for the web browser.

HTML and CSS, we're going to do some work with that.

You typically don't want to do the design from scratch so you'll pick a front-end framework like Bootstrap.

Maybe you're even writing some rich JavaScript apps with say, Vue.js.

Those are grayed out, because like I said we're not doing much with JavaScript but these are the technologies that you might put into play for a full-stack web application.

The web can be a little bit daunting.

We're going to go through most of these technologies.

All of the white highlighted ones in this course and you'll have concrete small examples for each one.

In the end, we'll have it all put together in a full-stack Python-based web app using Pyramid SQLAlchemy, and Bootstrap.

|

|

|

show

|

1:32 |

This is not an introduction to Python course.

We do assume a little bit of working knowledge.

We assume that you have basic Python programming skills.

I would say if you're, what you might consider an advanced beginner, you're totally good to take this course.

We're going to use things like classes and decorators and functions and so on but we're not going to describe what a class is or describe what a function is or things like that.

So make sure you have some basic working knowledge of Python in order to take this course.

If you don't, consider taking my Python Jumpstart by Building 10 Apps course.

That's all about learning Python.

So here we assume that you know Python.

We also assume that you know a little bit of HTML.

That if you look at HTML, at least you can make sense of it you know what, say, an attribute is what a class is, things like that.

We're not going to be doing too much advanced stuff with HTML.

I'm not sure if there is such thing as advanced HTML but we're not going to be doing too much with it but of course, this is a web class and we're going to be doing a lot of HTML but I assume that you can work with standard markup.

Similarly, we're going to be using CSS and I'll talk a lot about what the selectors and classes and design mean, and especially when we get to the bootstraps section and applying that to our website.

So we're not going to assume you know tons of it but we're not starting from scratch with CSS either.

So basic HTML, CSS, and Python knowledge that's what we assume you're starting with and then we're going to build our full-stack web app from there.

|

|

|

show

|

3:44 |

When we talked about the incredible power of the web, we saw some amazing web apps built.

Only some of them were built with Python.

Let's look at a whole bunch of other apps web apps, built with Python.

I think it's always great to have some examples.

You know, maybe you're trying to convince your team or your boss, or something like that.

"Hey, we should build this particular app we're trying to launch in Python and here are five examples of other apps that are similar, that are amazing." So let's go through a quick list to give you some ammunition.

You might be familiar with The Onion.

This is a fake news comedy site that's hilarious.

They post all sorts of crazy, outrageous news items and that, of course, is built on Python.

Spotify, the music streaming service, their web app, Python.

NASA and JPL make heavy use of Python for all their awesome space stuff.

Bitly, the URL shortening site that gets tons of traffic does all sorts of analytics and stuff around the URLs they shorten, Python.

Bitbucket, SurveyMonkey, Quora what I think one of the very best online Q&A forums for thoughtful, somewhat deep answers.

Not always great but pretty good, built with Python.

They're actually pretty enthusiastic users of Python.

If you look at their engineering blog they often write about how they use and configure and optimize Python for what they're doing.

Disqus the comment section that you can embed into any web application.

If you go to either of my podcast sites, talkpython.fm or Pythonbytes.fm, you'll see at the bottom this little Disqus section.

That is built with Python.

Many of their services are based on Python.

Instagram, an incredible amount of traffic goes to Instagram, and they make heavy use and, again are very passionate users of Python and their engineering blog covers a lot of cool things like how they disabled garbage collection, for example to make their web apps run much faster.

Reddit, the front page of the internet, as some say gets tons of traffic, I think it's in the top ten websites on the internet built with Python and SQLAlchemy.

Youtube, we already talked about that.

Youtube is amazing, they get millions of requests per second and are based on Python.

The brand new relaunched PyPI, the Python Package Index at pypi.org, is built with Python and Pyramid.

They get tons and tons of traffic and they actually tried Flask, they tried Django and they tried Pyramid and they decided Pyramid was the best choice for what they were building.

Pinterest, also built on Python.

Paypal has some of their pricing services written in Python, and these pricing services are called by different parts of Paypal's infrastructure and other services and websites to figure out what the exchange rate is, what the fee is that goes along with various transactions and so on.

That has two to three billion requests per day written in Python with sub-millisecond maybe just single millisecond response time.

It's pretty incredible.

Dropbox is a heavy user of Python, the client you get on your machine, as well as much of their backing services, Python.

In fact, Guido van Rossum, the guy who created Python, works there at least at the time of this recording so you can bet that it's a real center of the universe around Python.

And, last but not least, Talk Python.

All the Talk Python stuff, the training site the podcast site, all that stuff is based on Pyramid and Python.

It's been working out amazing.

We get incredible, high-performance web apps that are easy to maintain.

We'll share a lot of the lessons from that experience in this course.

Want to read more about all of these how these companies are using Python?

Well, how about we use a little Python to get there.

Bitly/pyapp-25, that'll take you over to an article where I pulled a lot of this stuff together with more background info than I put in here.

|

|

|

show

|

4:50 |

In case you haven't already scanned the table of contents to figure out what we're going to cover let's really quickly go through some of the topics that we're going to cover in this course.

After this introduction section we'll briefly cover what you need to set up your machine and the tools and editors you need to follow along.

We're going to introduce the Pyramid web framework some of its design principles and compare and contrast it with other popular frameworks.

We'll create our first site.

Here's where we start writing code in this Chapter 4.

We're going to create our first site and get it up and running in Pyramid.

We'll look at the various options for HTML templates and then, we'll dig deeper into the Chameleon template language.

If you want to use another one, that will be totally fine but I make a case for why I think this is the best HTML template language out there.

We'll see that mapping URLs to methods in our web app is a central part of the MVC framework, the Model-View-Controller framework that is in Pyramid and popular among many of the Python web frameworks.

So, we'll focus on taking a URL and getting it to run a function and getting some kind of response.

Once we have some basic pages up and running we'll look at how we make our pages beautiful.

We'll bring in Bootstrap.

Also talk a little bit about some other front-end frameworks if you want to use something other than Bootstrap but we'll look at Bootstrap and how to bring that into our web app.

At this point, we'll have basic web app functionality happening but it'll all be based on fake, in-memory data.

Of course, we don't fake, in-memory data.

We want live, full-stack web apps.

So, we're going to move down a little bit in that stack towards the data access layer.

We'll talk about SQLAlchemy, how the ORM works we'll define classes that map our data into our database, and, of course run queries to get that data back.

See, the SQLAlchemy ORM is great for creating the initial database structure but if it changes, things are going to go crazy.

Right, our database is going to be out of sync with our ORM classes and you'll see that's a full-on crash.

It's really, really bad.

It's kind of hard to maintain the stuff.

Well, Alembic, a database migration framework that's peered with SQLAlchemy will come to the rescue.

We can point Alembic at our SQLAlchemy classes and our database, and go, alright.

We changed these.

Make the database scripts that are needed to do that change, to apply those changes automatically.

Just make that for us and then we can apply that to like say, staging or production as we roll out new versions.

It's going to be great.

Once we have our database working we'll want to accept user input.

Probably save it to the database, as well.

So, we'll talk about HTML forms letting users create accounts register, enter data, that sort of thing.

If you're accepting data, you had better be validating it, right?

The internet is a harsh place and lots of people try to send invalid data either by mistake or maliciously.

We're going to talk about some really awesome design patterns called view models for server-side validation and data exchange and then, we'll also talk about leveraging some HTML5 features so we don't have to write any JavaScript for client-side validation.

Testing web apps can be tricky because web applications depend upon things like databases, and other services and the web framework, itself, right?

Like the request-response model, all that.

So, we'll see that Pyramid provides special infrastructure to make it testable so that we can test our web application more easily without actually creating the servers and doing heavy weight stuff like that.

Once our app's built, tested, everything's working, it's time to ship it, right?

Put it online, so we're going to take our web app and deploy it on a brand new Linux server out on the internet and we'll have our web app running and live.

You'll see everything you need to do to take a bare Ubuntu image, and get it up and running to be our web and database server.

Finally, we're going to take a look back at how we built our application how we've structured it so that we have a lot of flexibility using various design patterns for accessing data and validating data, and so on.

And as an exercise, we're going to convert from a relational database to a MongoDB database.

That's interesting, if you want to know about MongoDB, you'll see how to do that but it's also interesting just to show how flexible these design patterns are because we're going to make this conversion by just changing a few handful of files even though there'll be many, many aspects of our site.

We'll have that really isolated and focused so it's super easy to do whatever we want with our data access.

If you wanted to, say, convert it to calling web services and some kind of micro service architecture you'd see the techniques we're using here for MongoDB would equally apply to that exact same transformation.

This is what we're going to cover.

I hope you find it super interesting.

It's a real world version of what you need to build and ship web applications.

This is almost everything you need to know to go from I know a little bit of Python and HTML to running these full-stack web apps that are things like the training site or YouTube, or stuff like that.

I hope you're excited to learn it.

I'm definitely excited to share it with you.

|

|

|

show

|

0:44 |

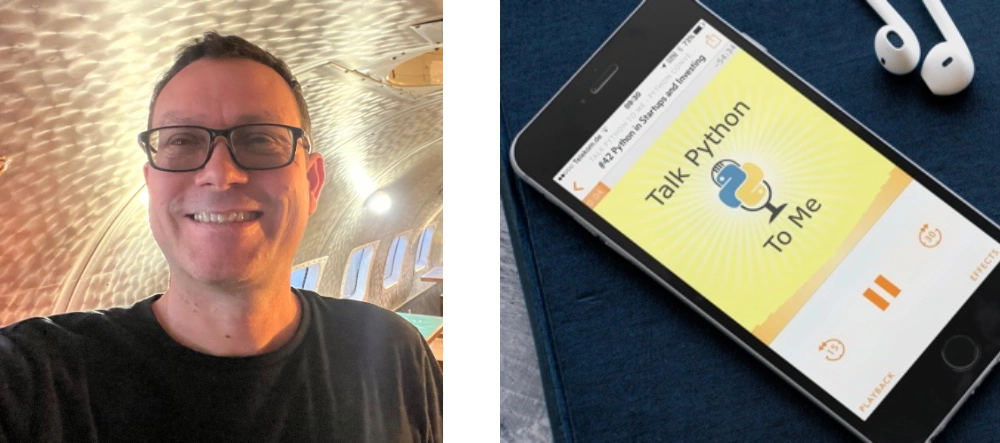

Now, before we get to the rest of the course, let me just quickly introduce myself.

Hey, I'm Michael, feel free to reach out to me on Twitter where I'm @mkennedy.

I've done a number of things in the Python space, one is create the Talk Python To Me podcast, where I've interviewed a lot of people who were involved in these various technologies, Mike Bayer from SQLAlchemy, Chris McDonough, the founder of Pyramid.

I'll talk a little bit about some of those episodes when we get to the right place and how you can learn more.

But I started and run the Talk Python To Me podcast.

I also run the Python Bytes podcast which is sort of a news one.

Check that out.

And of course, I'm the founder and one of the main authors at Talk Python Training.

Nice to meet you, I'm really excited you're in my course now let's get started.

|

|

|

show

|

2:05 |

Welcome to your course i want to take just a quick moment to take you on a tour, the video player in all of its features so that you get the most out of this entire course and all the courses you take with us so you'll start your course page of course, and you can see that it graze out and collapses the work they've already done so let's, go to the next video here opens up this separate player and you could see it a standard video player stuff you can pause for play you can actually skip back a few seconds or skip forward a few more you can jump to the next or previous lecture things like that shows you which chapter in which lecture topic you're learning right now and as other cool stuff like take me to the course page, show me the full transcript dialogue for this lecture take me to get home repo where the source code for this course lives and even do full text search and when we have transcripts that's searching every spoken word in the entire video not just titles and description that things like that also some social media stuff up there as well.

For those of you who have a hard time hearing or don't speak english is your first language we have subtitles from the transcripts, so if you turn on subtitles right here, you'll be able to follow along as this words are spoken on the screen.

I know that could be a big help to some of you just cause this is a web app doesn't mean you can't use your keyboard.

You want a pause and play?

Use your space bar to top of that, you want to skip ahead or backwards left arrow, right?

Our next lecture shift left shift, right went to toggle subtitles just hit s and if you wonder what all the hockey star and click this little thing right here, it'll bring up a dialogue with all the hockey options.

Finally, you may be watching this on a tablet or even a phone, hopefully a big phone, but you might be watching this in some sort of touch screen device.

If that's true, you're probably holding with your thumb, so you click right here.

Seek back ten seconds right there to seek ahead thirty and, of course, click in the middle to toggle play or pause now on ios because the way i was works, they don't let you auto start playing videos, so you may have to click right in the middle here.

Start each lecture on iowa's that's a player now go enjoy that core.

|

|

|

|

7:36 |

|

|

show

|

2:31 |

I'm sure you're ready to get in and start learning about Pyramid and building the web apps right away but before we do, let's just take a quick moment and make sure you have everything you need to follow along and write and run these programs.

Obviously, you're going to need Python, right?

This is a Python course and in fact we're going to use Python 3.

Python 2 will go unsupported in less than two years from now so of course we're using Python 3.

However, Python 3 doesn't come on all the systems.

Let's see if you have Python.

How do you know whether you have Python?

Well, you can go to your terminal on a Mac or on Linux and type Python3 -V and it'll tell you either no there is no Python 3 or it'll tell you what version.

When I ran this command it was 3.6 but actually 3.7 just came out so Python 3.7 is the latest.

As long as you have 3.5 or later, you'll be fine.

If you're on Windows this doesn't work.

It's a little frustrating but Python3 is not a command that comes installed with Python by default.

How do you know what you have on Windows?

Well, you could type Python -V and that'll tell you what one of your installations is if it's in the path.

It might be better to ask where Python and it will show you all the different locations and then you can type Python -V and see which one.

For example, on this Windows 10 machine we have the Anaconda 3 Python which is Python 3 from the Anaconda distribution.

We have Python 3.6 from Python.org, and 3.5 from Python.org all in these random, different locations here.

So you can adjust your path to target the one that you want but make sure when you type Python -V you get something Python 3.5 or higher.

Depending on your OS if you type this and everything works you're ready to take this class.

You have Python set up.

But what if you don't?

Maybe you need Python, maybe that didn't work.

There actually a whole bunch of tradeoffs and different ways to install Python.

One way, if you're on Mac or Windows, is just go Python.org download the latest installer, and get it.

But I want to give you a little more guidance.

Drop over at realPython.com/installing-Python and have a look there.

They have for each of the operating systems a couple of different ways.

For example, for macOS, you might use Homebrew to install and update the latest version of Python.

Things like that.

So go over here, see what they say for your OS.

They're going to keep maintaining this and updating it over time.

So this is probably the best resource for installing Python if you need to do so.

Once you get Python set up you'll be ready to move on.

|

|

|

show

|

1:25 |

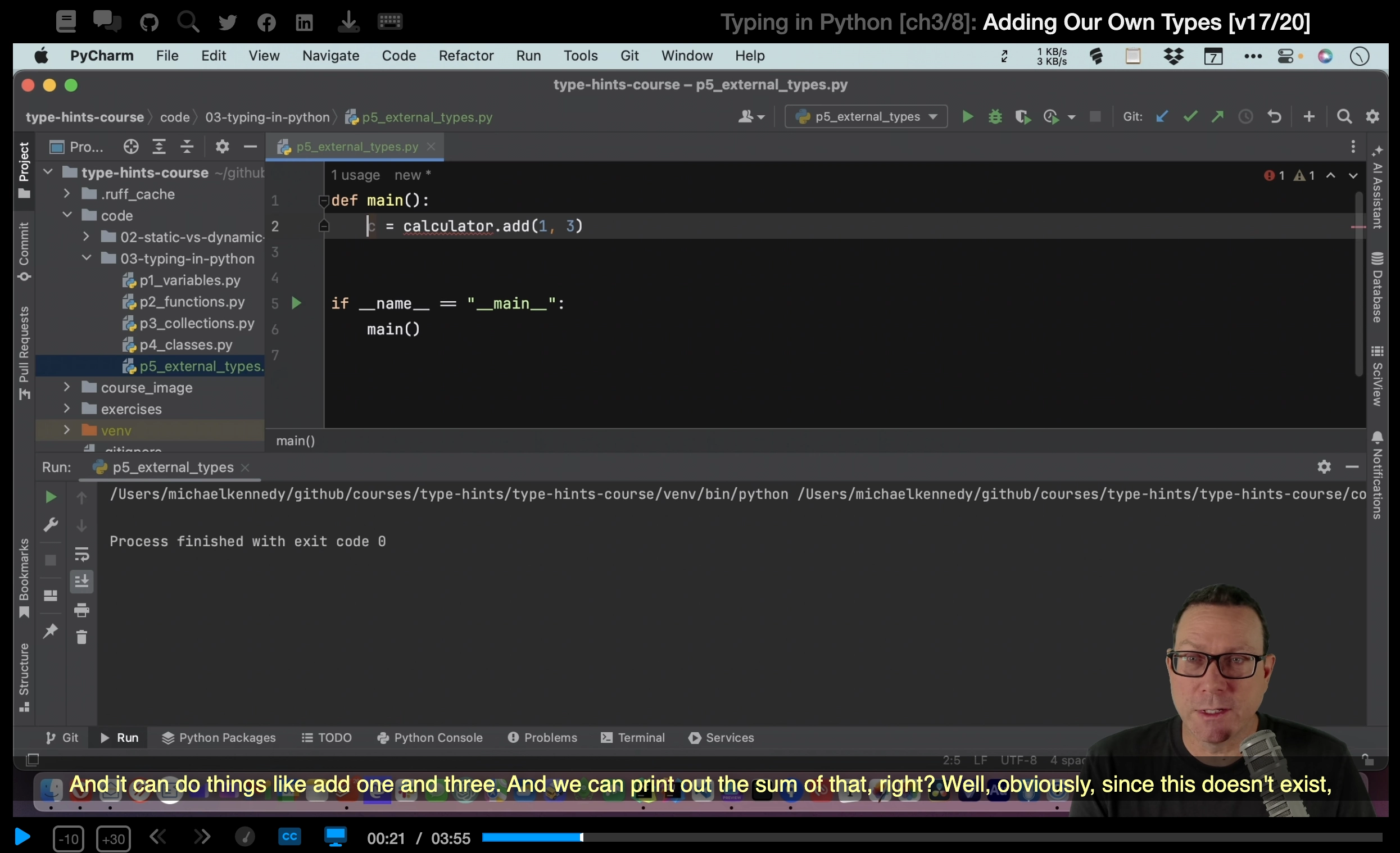

We'll be doing a ton of live demos and almost every bit of code and concept covered will be done in some form of a live demo.

That means we need a really solid editor.

We're going to use PyCharm.

I'm going to use PyCharm for this course.

And you, if you want to follow along exactly should also use PyCharm.

It's in my opinion the best editor at the moment for Python.

If you don't want to use PyCharm for whatever reason you can use whatever editor you'd like.

If you want another recommendation one that's free, the Visual Studio Code with the Python plugin is looking like really the second best option these days.

And it's really good, and it's really coming along.

One other thing about PyCharm they have a community free edition and they have a pro paid edition.

Often, the community free edition is totally good and you can use it for writing all kinds of stuff in Python.

However, one of the paid features is web and database support.

This is a class about web and database programming so as you might expect, you're going to have to have the professional edition of PyCharm to make full use of it with this course.

If you don't have or want to get the professional edition of PyCharm you can follow along with VS Code.

You can sort of follow along with the community edition with a few little hacks to make it run.

But you won't get the full editor to support in PyCharm community.

Be sure to get the right version to get the most out of this course.

|

|

|

show

|

0:30 |

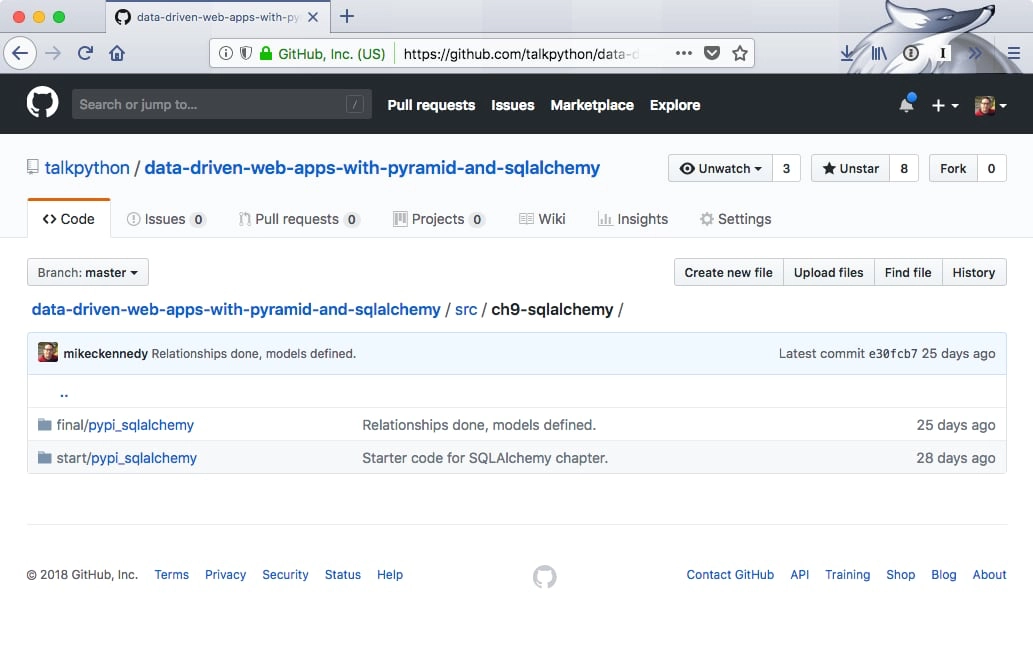

Everything you see me write.

Every bit of code that we create and all the stuff that we generate during this course and even some of the raw data needed to generate that is available for you on GitHub.

So I encourage you to drop over here at GitHub.com/talkPython/ here's a long one data-driven-web-apps-with-pyramid-and-sqlalchemy separated by dashes.

Go over there and star and fork this repository star so you can get back to it and fork it, of course so you have a permanent copy in your GitHub account.

If you don't want to, you can just go to the clone and download button and download the zip.

But I recommend that you link it to your GitHub account.

That way you have access to it forever.

So if you're wondering how something worked dropped over here in the GitHub repository and check it out.

There's a section that has the various versions the various chapters.

Every single chapter we have a separate saved copy of what we built.

You can go and see exactly what was built.

There's a before and after section.

So that you can compare and see what was done during just that chapter.

Alright, so, be sure to grab this.

And, and get it yourself.

We're ready to go.

You could start from scratch and follow along.

But like I said, at some point, you'll need, like the data and stuff to generate, say, the database.

|

|

|

show

|

1:15 |

Now, I've tried to structure this course so it's easy for you to follow along.

It's great to have a bunch of building blocks.

Here's how you do routing.

Here's how you do templates.

Here's how you talk to the database.

And then say well go forth and you can put your building blocks together.

Good luck.

But it's way more fun to build them up and have something you've created over time.

So, I've tried to design this course in a way that you can do that and if you get off track you can sort of reset.

I talked previously in the other video about having a before and after code for each section in the GitHub repository.

Let me show you that right now.

Here we are in the GitHub repository.

If you go over here to the source section you'll see it doesn't sort it really great numerically but for each chapter that we've written code we don't actually write code until Chapter 4 but for each one we have a separate section.

You'll see that say Chapter 5 is based on Chapter 4 code.

Chapter 6 is based on Chapter 5 code.

Things like that.

So if you want to follow along with the routing part you come over here and you can see we have this start section.

This is the code that we typed in that I typed in when we started Chapter 6.

This is the result of all the work we did during Chapter 6.

So if you want to say start from where we were in Chapter 6 and then do the routing work yourself just make a copy of this folder and start going.

Of course, this will become the starter code for Chapter 7 and so on.

We talked about in the course how to get one of these loaded up and running.

How do you create the virtual environments and register the packages and stuff like that that you need?

So, we'll get to that in due course but just know that we have these sort of before and after codes for each chapter and ideas that hopefully that helps you follow along if you get lost or something along the way just grab the starter code from a particular section or you can go back and sort of diff the two directories and see what changed.

|

|

|

|

1:55 |

|

|

|

22:25 |

|

|

show

|

3:41 |

Now this is obviously a course on Pyramid, so we're going to choose Pyramid as our web framework for this course, but let's talk through the various considerations and some of the trade-offs that you make with the web frameworks in Python.

The best way to think about these frameworks is as a spectrum and on one end we have what I refer to as the building block frameworks.

These are things like Django.

Now what do I mean by building blocks?

Well, they're really large sections of functionality you can just drop in or turn on and off.

For example, if I want an Excel-like editing experience for all of my database, all my tables in my database, with Django I can just drop that in.

I can just turn on a thing and, boom, you have Excel-like editing or maybe Google spreadsheets, more accurate because it's in the web, but you have this sort of big things you can drop in.

You want to a CMS, drop in the CMS.

You want user management, you drop that in there.

It comes with a built-in data access layer.

This is all a positive thing in a sense that you get these great pieces of functionality.

The drawback, and to me just personally, this is a pretty big drawback, is if you don't like the way those things work, if you don't to follow the prescribed way of doing things that Django offers you, well, too bad.

Django's going to make it much harder for you to be different or to choose what I think of as the best of breed building blocks.

Do you want to use, say MongoDB and Redis in this unusual way, you want to create your own little management backend, things like that?

Well, then you still have all the baggage of Django, but none of the benefits because it doesn't work that way.

You're fighting against the system.

If you would rather work that way, what you probably want is a microframework.

We have one in our framework called Bottle out there, super, super lightweight.

It doesn't do a ton, but it basically says, "Here's HTTP request, what do you want to do with that?" That's more or less it.

Probably the most popular microframework would be Flask.

Flask is very well known and used a lot.

Flask is a fine framework.

Probably my second favorite of all of them.

Flask is good.

It's a little more common to use Flask to create web services than it is to create web applications, so HTTP services rather than a bunch of pages, but of course Flask is used for that as well.

I'd say Flask offers a little more functionality than Bottle and then we have Pyramid, which has a little bit more support for building full-on web applications than say Flask comes with, in my opinion.

There's some benefits.

There's some drawbacks.

Some of the benefits of Pyramid include, it has better Python 3 support, it has better template support, it's faster, couple of other things.

We'll maybe talk about those.

It really comes down to the trade-off of do you want a microframework or do you want one of these big building block frameworks like Django, then the difference between, say, Flask and Pyramid is actually much, much smaller.

What you learn in one is largely applicable to the other.

We're going to see that Pyramid being a microframework will allow us to use something like SQLAlchemy and then if we decide, no actually we'd rather switch to MongoDB, no problem.

That's a super, super minor change and everything else will just keep working.

That's the really nice stuff of a microframework, real nice features of a microframework is that it let's you pick the various pieces and put them together just the way you want, not the way the framework wants you to or the few choices that framework has made for you.

|

|

|

show

|

4:13 |

Let's spend a moment on the Pyramid philosophy and some of the principles that they use to drive their project.

I love their tagline: The start small, finish big, and stay finished framework.

You'll see that the principles really drive that mission statement there.

First of all, they focus on simplicity, and the pay-only-for-what-you-eat approach.

And what that means is they've designed the framework so that you can get results even if you have only a partial understanding of Pyramid.

You don't need to understand all the stuff in order to make use of it.

You just need to know how to, say, create a view and a template and pass data to it.

That's really all you need to know if you just want to show simple data, things like that.

So they've built this start small and add your understanding to do more and more advanced stuff over time but if you don't care about it, you don't need to know about it, and that's really nice.

Being a microframework, they focus on minimalism; the core things that web applications do: mapping URLs to code, templating, security, and static assets.

And that's pretty much it.

Every web application has to do stuff like this, so that's what they've focused on, doing this really fast and really well.

Because Pyramid's relatively small, they can focus on having documentation for everything.

So their goal is to make sure nothing goes undocumented.

And I could tell you, I've submitted a couple of PRs back to various aspects of Pyramid and a lot of the conversation actually has been, well, what are the effects of this change on the documentation, on the tutorials, and making sure that that's always up to date.

Maybe even more so than, is this the right change for the framework?

So I can tell you they take documentation super seriously.

We talked about focusing on the core things the web framework does, routing URLs, serving static assets, and so on, and I can tell you that Pyramid is one of the fastest Python web frameworks out there.

It's super fast, really runs well, and it runs well on Python 3.

Some of the frameworks out there, you'll see they run pretty fast on Python 2 but not on Python 3, and nobody wants to be in that place these days, right?

Python 2 is on its way out, so having this run fast on Python 3 is really, really awesome.

I can give you a little bit of background just running Pyramid for my web applications, both the podcast website and the training website run on Pyramid.

I've had various things of those sites each featured on Hacker News, and be on the front page, in the top five or top 10 on Hacker News for a day or two, and that sends a ton of traffic over.

You go over to the web server, pull it up, it's like a $5/10 web server, virtual machine, and it's doing like 5/10% CP usage even though it's just getting hammered with all this traffic.

So this framework is super fast and easy on the hardware, and really, really scales in some impressive ways.

We obviously want our web frameworks to be reliable, and it's really encouraging that every release of Pyramid has 100% statement coverage for their unit tests.

That's awesome.

Pyramid is open source and released under a permissive license.

You can see your github.com/pylon/pyramid, and you can go check it out, download it, clone it, do whatever you want so you'll always have access to your web framework.

One of the first things that drew me to Pyramid was actually it's super strong support for Python 3.

So they made a statement early on saying, Python 3 is the future, Python 3 is going to be our default implementation.

Yes, it may still run on Python 2, but we are going to build all of our demos, build all of our examples, and primarily test and develop on Python 3, and make sure things run fast on Python 3.

Which they've done really well, and that's awesome.

You definitely need to be on Python 3 these days, Python 2 is on its way out really, really soon.

At the 2020 deadline, it's basically going unsupported and you do not want to be built on a framework or a runtime that's unsupported.

So the fact that Python 3 is the default implementation for Pyramid is just excellent.

|

|

|

show

|

3:31 |

It's time to take a quick tour of the various building blocks or the concepts that we use in Pyramid to build our web applications.

But what are these building blocks?

Everything starts with a route.

When we get a request into the web server, we're only given a URL and the URL has to be mapped over to some sort of behavior.

And Pyramid is a MVC, model-view-controller framework, which means we need to come in and figure out which function should that map to which controller.

And then let it process that and return whatever view it decides makes sense, okay.

So the first thing that we're going to do is to define a set of routes or pattern-matching on URLs to figure out where does that request get handled within our application.

Then we'll have, what Pyramid calls views, but I'd prefer to think of as controllers because of the MVC nature.

And these are either methods or they can be classes that process our requests.

In our course, we're going to stick to the method style of working, but think of it as just something that you can call some function or method that can be called to actually handle the request.

Here's the URL.

Here's the data associated with it.

Maybe from the URL itself.

Maybe from a query string.

Maybe from a POST.

Take that data and just process the request whatever that means to you.

Once the request has been processed, we need to generate a response.

And, very often, what this is going to be is some form of dynamic HTML.

Maybe you run a podcast and you want to be able to say / is going to show the details for that podcast.

Well, the template itself, the basic HTML stucture's always going to be the same, but the various pieces of data, what is the description, what is the play link and things like that, is going to change.

So we want some kind of dynamic HTML.

See the Pyramid has at least three options on how you can build these.

Three different templating languages you can use.

But really nice support there.

The data that is passed from the controller down to the template, this is called a model.

So this is both data and behavior passed to the view.

And this is typically done in the form of a Python dictionary.

There's also support for static, or cached, assets.

So if you've got CSS, JavaScript images, those types of things, one of the easiest things you can do to make your website seem ultra-fast is to cache those really, really carefully.

So you'll see that maybe, even though you might return, I don't know, 250K of JavaScript and images on a particular page, if your browser caches that, that site is going to get much, much faster after the first request.

Of course, configuration is super important.

The way we want our app to work locally, whenever we're working on it.

The way we want it to work in production.

These are probably very different things.

Maybe different database connections.

Maybe one has outbound email turned on for all the actions.

Like if you click the reset password button for a user, well that's probably the user doing that in production, they want to get an email.

In development, you want to make sure that does not happen.

If you're testing some problem, like I'm interacting with a user that says, "I can't get into my password reset," and you touch that button, logged in as them, you don't want to actually send them a bunch of fake emails.

So you want different configuration settings.

No email in dev.

Real email in production.

Things like that.

So Pyramid has support for that, as well.

|

|

|

show

|

3:15 |

So, let's dig into these building blocks.

First of all, we have our views or controllers.

So, what does that look like?

Well, we start by importing the necessary decorator, from Pyramids, so from pyramid.view import view_config, and then we just define a function.

The name of the function doesn't actually matter, in this case, and then we're going to map...

a route...

to that view.

In order to make a regular function become one of these controller functions, or actions, what we're going to have to do is we put this @view_config decorator.

Usually what you'll find is there's at least two values you set, sometimes it can be omitted, sometimes there's more, we'll talk about that...

Is we're going to set the route name.

This is what URL maps to this function, it's a route name.

And then the template that we're going to use to generate the dynamic HTML, so that's the renderer, we're going to use mytemplate.pt right now.

And then this method always takes a request object.

Which is one parameter request, and that's the way it works.

The request carries with it things like the data passed, and the URL, the form post, all that kind of stuff.

It's sort of the jumping off point to get the data out of all the inbound stuff, and things like cookies, and so on.

We're going to take that data, do whatever it is we got to do, maybe check for an account, maybe pull some stuff from the database, things like that.

And then when we're ready we're going to return our model to the template.

We do this by returning our model as a dictionary and the framework is going to manage getting that moved over to the template and rendered dynamically and responded back as HTML to the browser.

This is about as simple as things get, with views right?

All we do is we have a function, it returns a static dictionary.

Let's look at a more realistic one.

Over here, we have one and we're saying this actually only responds to POST messages.

We're going to reset password and maybe there's one that generates the form but this one is going to do the password reset when they submit that form, okay?

And first of all we're going to get the data that they put into the form, right?

What is your email that you're going to reset your password to when they click the button?

All that data from the form ends up in a dictionary called POST.

Alright, we're going to grab that out...

And then we're going to try to create a password reset using our data access layer.

That may or may not work, but if it doesn't work, then we're going to send them an error saying, "No no, sorry that didn't work," and we're going to render the reset password again, but this time the error message will be shown.

And if it does work then we're going to say, "Alright well it looks like that worked," so send them a message that says, "Check your email for the reset code." Presumably that repository thing actually sent the email.

Not sure that's a great pattern but it fits on one slide, so that's why it looks like that.

Alright, the idea is we call that function, and either it did not send the password or send the email, or if it did it saved it in the database, sent the email, and now they just got to go check their email.

Alright this is a more realistic type of view here processing a form POST.

|

|

|

show

|

2:49 |

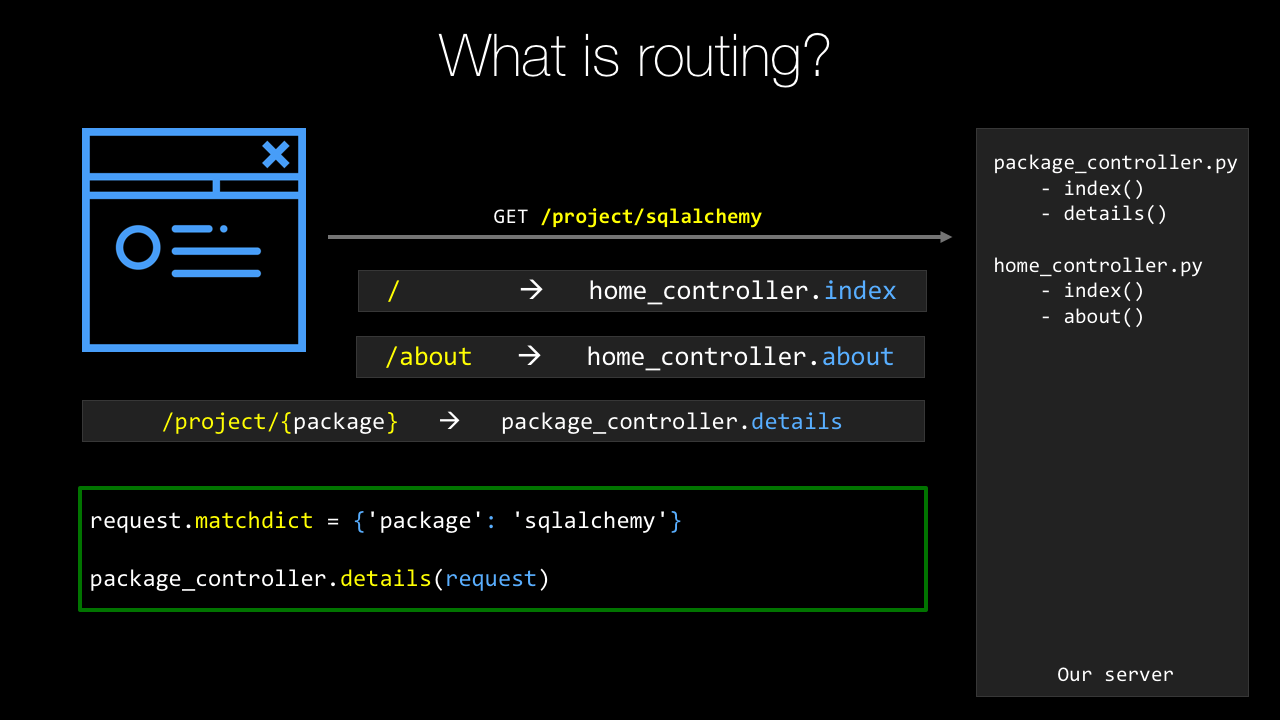

The next major building block to cover is routing, or routing, if you prefer British English.

Routes allow us to define patterns that match some URL and map over to a particular view or controller method.

Sometimes that just says, "This URL calls this function," other times it says, "This URL contains Data," so like /package/request or /package/sqlalchemy would pass the request or SQLAlchemy to that function.

So, let's see how we do this in Pyramid.

So, we're going to go to the main method in the __init__.py file that is basically the entry point or the start up for our web application, or we're going to start with this thing that's a configuration file reader.

On there, it has a function called add_static_view.

So here, we're naming the route static, and saying it maps to /static or anything under there.

So, what we can do is we can go here and say anything in this directory or below it, will be cached by a quick little calculation.

It's one month, so you've got to put an integer that is the number of seconds to cache it for, I like to put this as calculation so I can see 60 seconds, 60 minutes, 24 hours a day, 31 days, okay, that's a month.

Then we're going to define the routes that are the various URLs that go to our action methods.

So the first one is /, just like that, and that's home.

Right, that's just the basically the main page when you hit the site.

And we want to have a /help so add a route for that for help.

Then we're going to have, in our PyPI example, the route that they use for an individual package or the URL is /project/{package_name}.

Now notice, this one is different.

It has package_name as a curly bracket and then a variable looking thing.

package_name will actually be passed to the function that we apply this to.

So, like I explained before, SQLAlchemy, requests, whatever so the URL itself will carry the information along and that's defined in the route here.

Also, we have things like account/login.

And the final action to do is to tell the system to go figure out what functions are associated with these, so we have the route name, we also need to have to have the route to actually apply to this.

So we run the scans and it looks through all the Python files, looks for that view_config decorator using various route names and then it puts those two things together.

And finally, we have to return this WSGI application, W-S-G-I, WSGI, application that the web framework's going to use and this is just at the end of the main startup always.

So this is how you define routes in pyramid.

|

|

|

show

|

1:49 |

Configuration's a super important part of our web app.

Like I described in the intro to this chapter there's certainly behaviors you would like to be different in development than in production.

A real simple is, do you send email notifications to users when you take certain actions?

Well, in production, of course you do that, right?

I want to reset my password, or I purchased this thing.

If you're doing testing locally, especially if somebody comes to you and says, hey, the site's not working for me, I tried this, but this happens, you might want to log in as that user and then try that action.

And obviously you don't want them to get the email.

The easiest way to do this in Pyramid is to have different configuration files, both for development, production, test, whatever the various scenarios you have are.

In fact, when you create a new project, it comes with a development.ini and a production.ini, and in here we have a bunch of settings that Pyramid uses to control itself.

But we can add other settings just by saying key equals value, so db_file=/db/webapp.sqlite, API key equals whatever that thing is.

Notice there's no quotes on the strings, they just come back as strings anyway.

Super easy, we can do that.

Now how do you get to them in Pyramid?

Well, we saw that main startup method, and the settings being passed in there, so that's super easy.

We go to that again.

Now we can go to that config and say get us the settings, this is a dictionary that has those keys and values.

So if I want the DB file, I just say configsettings.get, and there's just standard Python dictionary access.

Get me the thing for DB file, get me the API key, and off you go.

And obviously these can be different in development and production.

|

|

|

show

|

2:03 |

The final building block that we're going to talk about here are templates.

Templates are super important.

They let us define in an HTML-like fashion the general markup for our site and then add little bits of dynamic behavior to it.

There's actually three choices we can use for the template language.

We can use Mako, we can use Jinja2, or we can use Chameleon templates.

This is a Chameleon template here, and I'll talk later about why I think Chameleon is the right choice.

But let's just see how this works.

It's kind of the same for all the template languages.

So what we're going to do is we saw that we passed a model over that had packages, right.

One of the keys in the model was packages, and the value was actually a list of packages.

We're going to use tal:repeat and I'm going to say for every package in packages, I want to replicate this HTML block, including the DIV that has the tal:repeat on it.

So we'll have a DIV containing a hyperlink in a span for every package.

And then for the hyperlink we need to set the URL, so within this little repeat loop we can say p.url, p.name and so on.

And then we're going to show the summary but only if the summary exists.

Like if there's an empty summary or it's not set, then we're not going to show it.

So you can see that if we want to take some sort of text or anything and get a string version of it and just plunk it into the page, we'll use dollar curly expression.

We can do these conditions with tal:condition and so on.

Often when we take this and actually pass that data to it and render it, that pyramid render it, it's going to look like this: requests, Http For Humans, boto3, AWS API and SQLAlchemy and so on.

Cause that was the model of the three packaged requests Boto3 and SQLAlchemay passed along to this template.

So that's what its going to look like.

Unstyled and ugly of course, we're going to work on that later.

|

|

|

show

|

1:04 |

Before we move on from our introduction of Pyramid, I want to give you a little extra information if you want to go deeper.

So, obviously, I run the Talk Python To Me podcast and over at talkpython.fm/3, so Episode 3, you can see I interviewed Chris McDonough, who was one of the creators of the Pyramid Web Frameworks, who, if you want to hear a conversation going deep inside from the creator of Pyramid himself, well, then, drop over here and you can have a listen.

It's about an hour long.

Similarly, we're going to talk about SQLAlchemy, and over at talkpython.fm/5 I interviewed Mike Bayer, who is the creator and maintainer of SQLAlchemy.

Also, similar story, go deep inside and learn the history and the features of that framework.

Obviously, I'll tell you everything I think you need to know to build the web apps and do the data access throughout this course, but if you want to get a little bit deeper and learn the personalities behind all this stuff, then definitely check out the podcast.

|

|

|

|

20:34 |

|

|

show

|

2:44 |

I don't know how you feel, but I think there has been enough talking.

It's time to write some code and start creating some software.

So, that's what this chapter kicks off.

We're going to create our first project.

And we'll see that there's actually a couple ways to do this either with a command-line interface which applies everywhere or a simplified, shorter way with PyCharm.

I'll show them both of you.

But let's talk about how we get started in Pyramid in general.

So, every web framework has its own style of scaffolding or the putting the structures in place so you can get started.

So, here, what we're going to use is a thing called Cookiecutter.

So, Cookiecutter is an arbitrary Python utility that takes starter projects for all sorts of things for Pyramid web apps but also for Flask and Django, and even old Atari-type things written in C and you can create projects from them and sort of get a headstart on that structure.

So, we're going to use the Cookiecutter packages for Pyramid to do this.

This is the official way recommended by the Pyramid folks.

Once we've installed Cookiecutter just pip install cookiecutter, we're going to use it to create a site.

Now there's several different types of templates we could use.

Within those projects we have choices like what templating engine do you want to use, or things like that.

So, there's a lot of flexibility here but we'll just start with what they call the starter site.

We're going to create a virtual environment.

This typically, the virtual environment you want that to reflect what you're running on the server what your target environment is and the way to control that really carefully is have a dedicated separate Python environment for your web app.

So, we'll do that here.

Not required, but very much recommended.

And then we're going to register the site as a package.

Pyramid's decided that their web applications are best deployed as packages and manages packages.

So, we'll see that there's an extra command that you have to run here and say "Here's the package that I'm working on, "install it into this Python environment." Not much to do but it's easy to forget so I'll show you how to do that.

And finally, we're ready to run our application.

We're going to serve it up, interact with it.

We'll add some features, and then we're going to go back serve it up again, test it and we'll just keep adding and adding and iterating.

So, these first four steps, we're going to do to get started and of course, you just build software over time by adding feature after feature after feature.

And so as I said in the opening, we can use PyCharm or we can use the command-line.

First, let's talk about a way of doing this that works for everybody no matter what OS you're on no matter what tooling you're using.

This command-line interface path will work for you.

It takes a couple steps but once you get used to it it's no big deal at all.

|

|

|

show

|

7:54 |

Alright, we're going to begin creating our project by simply opening up our terminal or command prompt if you're on Windows.

Want to going to go to the right location.

So over here in our demos, this is the Git Repository that you all have access to created a thing called Chapter Four: First Site.

For each chapter I'm going to have a starter and a final bit.

So, starters whatever we happen to start with.

Later on, we're going to start with our app making major steps.

Like one point, it'll have a design added to it.

At one point, it'll have SQLAlchemy data access added to it.

We want to start from that point on the next chapter so we'll kind of move along so you always can kind of catch up or start from the same place that I'm starting from at any given chapter.

So over here we're going to create our site in this final and the starter one, there's nothing to do yet.

We didn't start from anything.

This is from scratch so we'll just have a blank folder there.

So let's change directories over here.

In this, you'll see I have oh-my-zsh.

It's detected this is a Git repository.

It doesn't really matter if your shell does that or not.

So what we want to do, is we want to make sure that we have Cookiecutter installed so that we can run the various templates.

So we'll say pip, maybe pip3, just to be safe.

Install, it will ask for an upgrade just in case and I'll say --user cause we find it just installs in my user profile.

I'm not a machine, which means we don't require sudo.

Then finally, cookiecutter.

I should already have it.

Looks like everything's up to date.

Yours might have installed it.

You can always just type cookiecutter and see what you get, right?

Something like that.

It shows you where it comes from.

Okay, excellent, we already have this installed and ready to go.

So step one, make sure we have Cookiecutter installed.

We have that.

Step two, will be to use one of the Cookiecutter templates.

Now if we're over on the Cookiecutter site on read the docs, you'll se that there's this thing called pantry full of cookies; cookie cutters.

And there's tons of different things.

So heres a flask thing, heres a bottle heres a pyvanguard; whatever the heck that is.

If you look for a pyramid there's a whole section on these templates.

So here are a bunch that come from the pyramid team and this one down here I actually added myself for the Python for Entrepreneurs course.

The one we're going to use is this pyramid cookiecutter which is just a Git repository here.

So we can just take the root of the Git repository and we'll just come down here and say cookiecutter and give it the full URL to the repo.

Not .git, not that, just the page basically.

It says we've already gotten this before can we get a refresh version, yes.

It's going to ask some questions here.

It's going to say, "What is the name of your site?" We're going to call this PyPI cause that's going to be the name of our site.

Let's call this Python Package Index.

Alright, that's going to be our demo throughout the whole class here.

It's going to say the name that we're going to use in the Python Package in the folder structure stuff "Is Python Package Index okay?" Let's go with PyPI; something a lot shorter to type.

That says, "Great, we're almost ready.

"What template language do you want to use?" Default to Jinja2, use to default Chameleon.

We're going to use Chameleon.

You can make another choice if you want.

I'll tell you why later why I think Chameleon's the right choice but you can pick any one of these three.

Hit okay, and it's gone and created a various project structure over here so we could type tree and see what we got.

It's created this folder which because you can see it's got all this kind of stuff.

It's has a setup.py.

This is actually a Python package and here's the implementation of it.

It has the same name always.

Then there's a __init__.py for the package.

Then here's our pews, here's our templates here's our static files, and so on.

We can go up here and see it says "All right you can go in here "and what we want you to do is go into that folder "and create a virtual environment." So, we'll do that.

They want to create the virtual environment.

You can name it a lot of things.

.env, or venv, is one of the best choices if you're planning on using PyCharm cause it will automatically detect those.

I'll make it more explicit and say venv.

Now the next thing to do, and so we ask "which Python" and Windows asks "where Python" it'll say, "It's the system one." We want to do is use this one, so we need to activate it so we're going to run that.

Notice my prompt changes here and if I ask the same question I'm getting the one that I just created.

So that's good.

On Windows you don't say source and this is scripts; venv\scripts\activate.bat.

Okay, so now we have our virtual environment activated.

It's created in the directory that is the top level of the package; good.

Now all we have to do is to basically register our package and we can run it, register our website.

We do that by saying Python setup.py develop.

Now normally you would run an install command here.

What we want to do is tell Python to leave these files in place and just let us edit it here so we don't have to ever rerun this command again.

It'll just reference this local working location rather than copying it over to what's called site packages.

So we do this, it's going to install all the dependencies and get everything setup so we can run it.

All right, great, it's installed everything.

Now we can ask what is installed by saying "pip list" and we've got a bunch of things.

We've got pyramid and the underlying template language installed.

We even have this full local location installed with the package PyPI which is our web application itself.

One thing that's kind of annoying about virtual environments in Python is they always install out of date versions of both setuptools and pip.

So, go ahead and update that real quick too.

There's only 11 versions out of date.

What's wrong with that?

Okay, so we should be able to run our web app and go interact with it now.

Let's see "pserv" and we see if that exists.

Looks like it does.

That got installed when Pyramid got installed, okay?

And that Pyramid got installed when we ran the setup.

Then what we're going to give it is.

Let's just look around really quick.

We're going to give it either the development.ini or the production.ini.

Here we want to give it the development one so pserv development.ini.

There's a small chance this will fail.

There's this weird behavior sometimes that the package in the virtual environment doesn't get registered exactly right unless you activate, deactivate, and then reactivate it.

Fingers crossed this works, let's see.

Perfect, okay if it doesn't just deactivate the virtual environment reactivate it, and then you'll be totally good.

All right, so now if we go throw that into our web browser we should be golden.

Let's see what we get.

Ta-dah, the Pyramid Starter Project is up and running and this is the Python Package Index Pyramid Application generated by Cookiecutter; hooray.

So, we now have this basic structure over here.

If we look at it, whew a lot more stuff going on now that we have that virtual environment stuff registered.

Up here's what matters.

This is all the package installed locally and then the virtual environment.

This is what you really care about, right?

We've got our package here.

We've registered it and now it's running.

We can go edit our template to change this look and feel of our CSS.

We can go change our view to have different behaviors or to have additional URLs and so on.

All right, so we're up and running with Pyramid on the command line.

|

|

|

show

|

2:40 |

So you've seen how to create a Pyramid website using Cookiecutter, virtual environments and what not on the command line.

Let's review those steps so that you have a nice, clear series to follow.

Okay, so, what do we do?

We started by sayin' we're going to use Cookiecutter and we're going to need a template to work with.

So I use the Pyramid Cookiecutter starter template.

Just copy that URL and we're going to feed it to Cookiecutter,.

You can use any one of the five actually if you feel they match what you're doing better.

So we got to make sure we have Cookiecutter installed.

So pip install cookiecutter maybe throw a -U for upgrade a --user so it doesn't require sudo things like that.

But here we go it's going to get it going.

That's great.

We pip install cookiecutter and then we're going to create the scaffolding.

We want to create the site using Cookiecutter.

So we'll say cookiecutter and we'll give it the URL to the template.

It's going to ask a bunch of questions.

If you want to follow along make sure you choose 2 here for your project otherwise there's going to be trouble.

Alright, great, so you choose that you answer the various questions and it gives you a few steps to follow after that.

It says go to the project, create a virtual environment.

Make sure you upgrade pip inside of tools because Python doesn't do that for you.

How frustrating but that's the way it goes.

And then, once you've registered the project we'll be up and running and then you can serve it up.

Oh, what's that look like?

Well, we're going to run Python3 -m venv.

Some versions of Mac and Python intersected with PyCharm all require this --copies.

If you have the latest version of everything you don't need it but you can throw that on there if you're finding you're having trouble.

So we're going to create our virtual environment and we can activate it.

Say source .env/bin/activate on Mac or Linux on Windows it's just .env\scripts\activate.bat and then you can ask well which Python or where Python should be the one in your virtual environment.

Then final step is to install the dependencies install Pyramid, and to register the site with Python.

So Python setup.py develop.

Everything should be up and running and working.

It's just time to serve up your app.

Open the web server and start interacting with it.

So pserve and give it one of your configurations probably development.ini.

What'd you get?

Well you get your site up and running of course.

So really nice, here we are on the close looking at our starter project just like we did in the demo.

|

|

|

show

|

0:34 |

You've already seen how to create a pyramid website from the command line interface and like I said, that works everywhere.

But if you happen to be using PyCharm Professional this gets a whole lot simpler.

So, this does not work on the Community Edition one of the differentiators between the paid and Community Edition of PyCharm is this web development stuff, so really for this course the professional one is the only one that makes sense.

But if you have that, you could skip a lot of this and go ahead and just do it in PyCharm.

So, let's see how that goes.

|

|

|

show

|

2:33 |

Over here in PyCharm, let's go create the same type of app that we did on the command line interface but this time using PyCharm.

Here you can see we don't have any recent projects it's just basically bare.

So we'll say "Create a New Project" and let's use Pyramid, okay great.

It says we're going to create a new virtual environment and it's going to create it in the folder, doing exactly exactly what we did, so we don't have to expand that out.

And we want to set the location so let's go and say this is on desktop just call this "PyPI Web" or something like that, okay.

And we can also, this is important come down here to more settings.

Remember there are the different templates?

There they are again, and this is going to use basically the same thing.

And let's go and switch that to Chameleon from Jinja2.

Okay so we're ready, we're going to do all the stuff of running the Cookiecutter: it's going to install Pyramid it's going to run the right template for us create the virtual environment, activate it all those things, ready?

Go.

They're just creating the virtual environment.

Now it's installing Pyramid.

So if we look over here, we've got our PyPI Web and everything is pretty much up and running.

I'm not sure it's going to totally work yet, notice it's doing some indexing down here, give it just a moment.

PyCharm knows that this project is not installed into the virtual environment.

You can see if you just click on this terminal and all which virtual environment it is you can ask again which or where Python and it's this new one that we just created and it knows in here that this is not set up.

So it knows we need to run Python setup.py develop or I can just click that, and it's doing it for us.

See up here above it also says these packages are required but that setup action actually installed those packages so it kind of solves two problems at once.

Alright, now how do we go run our app?

We don't have to type pserve develop, we just press this.

and let's click it and see what happened, are we ready?

Ta-da, same thing.

Our starter template has been used to create a new Pyramid project, this time we just called it PyPI Web it was generated not by Cookiecutter, but by PyCharm.

Really cool, pretty much exactly the same thing but a whole lot simpler.

All we had to do was click Create New Project, Pyramid give it a name, and then click, it will go ahead and set up this package to be run and edited.

|

|

|

show

|

1:24 |

Let's review creating Pyramid web apps with PyCharm.

So remember, we just go new project, and we pick Pyramid and then we pick a new virtual environment.

We set the location, which basically names our website.

And make sure you expand out the more settings and choose Starter and Chameleon.

So Pyramid, virtual environment, Starter and Chameleon.

Basically, it solves a whole bunch of those things we did at once.

And then the final thing to do is to run the setup.py task in development mode.

Now, if you don't get that little dialogue that says hey you need to run it this way you can go to tools, run setup.py task and just type develop.

But the newest version of PyCharm typically finds that and you just click that little bar that comes up across the top.

Then you're ready to run your app.

How do you run it?

Well, you click that little play button and it runs, and then you go interact with it.

Easiest way to do that is just to click the hyperlink at the bottom and boom, your site's up and running.

So this is really a nice way to create new projects.

Honestly, I don't use it very often these days.

When I was new to Pyramid, I would use PyCharm to create those projects and it helped a lot.

The more I got good at working with Pyramid directly I find myself going to the command line more and sort of doing stuff manually.

But either way is totally fine this is certainly a good way to get started because it helps you through a lot of the steps and it makes it real, real simple.

|

|

|

show

|

2:45 |

Let's take a quick tour of the directory structure, or the file structure that we have for our pyramid project so you can see what, how they've organized it and what all the pieces mean.

Regardless whether you've created this in PyCharm or you've created it using Cookiecutter on the Command line you'll get the same basic output.

So over here, we'll have our directory here it's called make_your_project.

This is basically the top level package that represents our website.

So in here we have a static directory.

This is where CSS, JavaScript, and Images go.

You don't have to drop them all flat in there, right?

That's how the templates work but I would create a css folder, an images folder a javascript folder, maybe even more than that.

So any, sort of, directory structure you want to create underneath there, it's totally fine.

Then the dynamic HTML templates live in templates.

And PyCharm colors them purple but there's nothing really special about that directory.

When our app starts up it's going to run this __init__.py and there's a main method in there that gets executed.

So that is the entry point for your app that's where all the initial setup goes where we register the route and maybe we read the database configuration and get it all set up, things like that.

We're going to talk about how to test your web applications and one of the real big advantages of Pyramid is it's super, super testable.

We'll talk about how to do that and it's really, really nice.

And finally, where your views or controller methods go this is right now, a single views.py file.

That might be okay for a really simple app but in practice, we'll want more organization for our tests and more organization for our views, even templates.

So, consider this the starting point but as we build this up, I'll show you ways where you'll probably want to reorganize things as if you know, if this is going to be a real major application.

That's how it starts out, just a test file and a view file.

Now, this .egg_info thing, this is an artifact from running Python setup.py develop and it's needed for Python to do its magic but you can just ignore it, okay?

So it's going to be there, but just ignore it it's part of the register of this package for development mode.

We have our two project settings and configuration files.

We've gone over this a few times but development.ini, that's what you want for dev production for production, probably, things like that.

And that's really all you need to mess with.

Maybe setup.py, maybe?

If you're going to add a dependency or something like that in there but this is a starting place.

So you know how and where to go look for what you need.

Typically you're going to be in __init__.py in views, in templates, dropping stuff into static.

|

|

|

|

35:11 |

|

|

show

|

1:19 |

When we created our project our website with Cookiecutter you saw it ask a question.

What template language would you like to use?

Would you like to use Jinja2, Chameleon, or Mako?

So let's look at these three template languages all of which are totally viable choices for our web app and compare them and sort of see what the trade-offs are why you might choose one over the other and so on.

So like I said there's these three options.

We have Jinja2 and I would say really this is the most popular one of these.

It's very much the default for Flask and now it is also become the default for Pyramid.

So being the default means most people pick that and I believe Flask this is the only one that ships with it.

So that really tilts the scale in it's favor if you will.

There's also Mako.

This is probably the least popular.

This is the one I've seen the least of I guess but maybe I'm just not looking in the right places.

But Mako and Jinja2 are pretty similar.

And then Chameleon we've looked at Chameleon.

And below you see the three packages that you have to install only one at a time for enabling that language in your web app.

So pyramid_jinja2, pyramid_mako, or pyramid_chameleon.

And if you pick the various options in that Cookiecutter template it will choose for you.

|

|

|

show

|

4:38 |

So, let's take a simple but fairly realistic example to compare these three template languages.

Okay so the idea, the goal will be given a list of category dictionaries with an image, name an image and a name on each one of them display them in a responsive grid.

In this example we're going to be working with this data and these are actually from a bike shop so there's different categories of bikes: comfort, speed, hybrid, and each one has a category picture we won't go into the details, right, this is not our main demo and stuff, but pretty simple example.

We're going to return this data to the template and our goal is to turn that into HTML showing the picture in a responsive grid type of layout.

We want it to look something like this.

We're going to do this in each of the languages and see what the various moving parts look like.

First up, Jinja2.

So you can see with Jinja2, every time you have a statement on a given line, you open it with {% so {% if not categories %} So if there are no categories past this view we want to just show no categories rather than like a blank page.

But if there are categories, we want to loop over each one of them and print out or add to the DOM a div and within that div having the image within a hyperlink and the category within a hyperlink.

So a couple things to note there is a lot of open close, open close, open close notice at the end we have {% endfor %}, same with endif.

That seems unnecessary and annoying but the thing that I think is not great about Jinja or Mako you'll see is the same is this is not valid HTML.

I can't take this and open it up in an editor that does not understand Jinja and have it think it's okay, right I can't hand this to a designer and have them use their tools on it.

But okay, let's put that aside for just a minute what else have we got here?

So we do {{ }} to get the value out, we want to get the name, and we want to print the lowercase out.

That seems great, there's nothing wrong with that that seems totally beautiful it's as good as it's going to get.

But this {% endfor %} and the fact that it's not valid HTML Eh, I'm not loving it.

Alright so that's Jinja2 let's look at how it looks with Mako.

Super similar, a little bit less syntax, it's not {% if not categories %} but it's % and then one line of Python including the colon.

You do still have the endifs and the endfors and so on.

Now, in Mako the values that come in as dictionaries you have to treat them as full-on Python dictionaries you can't do your attribute access, you can't say c.name you got to c.get(name).lower(), maybe if you want the default value that's not none to be a little bit safe here.